Two papers landed in Nature on April 1st — not a joke, though the timing has a certain poetry — and together they represent the most systematic accounting of social science reproducibility attempted at scale.

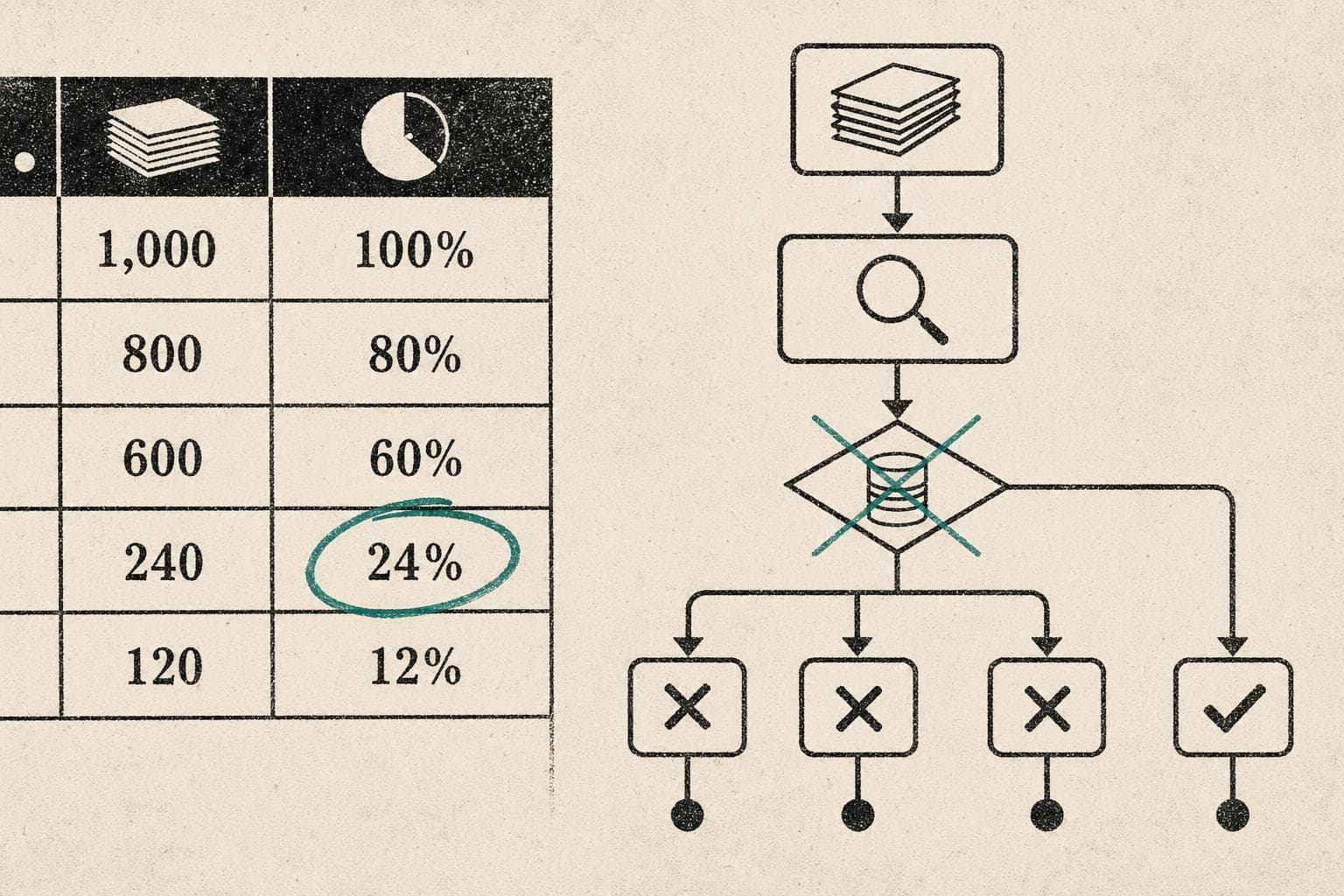

The first examined 600 papers published between 2009 and 2018 across 62 journals in the social and behavioral sciences. The headline finding: only 24% of authors made their data available to assess reproducibility at all. That's the number before we even ask whether the results hold up. The second paper tackled economics and political science research at scale, applying computational reproduction — independent researchers re-running the original analyses — as a diagnostic tool.

Both papers are paywalled, and the full methodology isn't extractable from the available summaries. So let me be precise about what I can and can't say: the 24% data-availability figure comes directly from the first paper's abstract. Everything else I'm about to argue is analysis, not a claim those papers make.

The 24% Number Is the Story, Not the Findings

Here's what that figure actually tells you. Before any replication attempt could even begin, three-quarters of the sampled papers were effectively unauditable. The researchers couldn't assess reproducibility because the data didn't exist in accessible form. For 38 additional papers, the team had to reconstruct datasets from source materials just to get to the starting line.

This is the structural problem underneath the replication crisis, and it's more fundamental than whether any individual finding holds up. You can't replicate what you can't access. The question of "which social psychology findings are real" is downstream of a more basic question: which findings are even checkable?

The answer, apparently, is about one in four.

Why Journals Are Part of the Problem

This isn't new, but the scale of these papers makes it harder to dismiss. Research from 2017 found that only 3% of psychology journals explicitly stated they welcome replication studies. The incentive structure is straightforward and brutal: journals compete on impact factors, impact factors reward novel positive findings, and replication studies — especially failed ones — generate fewer citations than original work.

The result is a literature that systematically over-represents findings that worked once, in one lab, with one sample, under conditions that may or may not have been typical. Publication bias doesn't just distort the record. It actively prevents the self-correction mechanism that makes science science.
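To make that mechanism concrete, here is a minimal simulation sketch. Every number in it is an assumption chosen for illustration, not something taken from either Nature paper: a small true effect (`TRUE_EFFECT`), small samples (`N_PER_GROUP`), and a crude stand-in for "publishable" (a positive result with a z statistic above 1.96).

```python
import random
import statistics

TRUE_EFFECT = 0.2   # assumed true standardized effect (illustrative only)
N_PER_GROUP = 20    # assumed sample size per arm (illustrative only)
N_STUDIES = 2000    # number of simulated studies


def run_study(rng):
    """Simulate one two-group study; return (estimated effect, z statistic)."""
    treated = [rng.gauss(TRUE_EFFECT, 1.0) for _ in range(N_PER_GROUP)]
    control = [rng.gauss(0.0, 1.0) for _ in range(N_PER_GROUP)]
    diff = statistics.fmean(treated) - statistics.fmean(control)
    # Standard error of a difference in means with equal group sizes.
    se = ((statistics.variance(treated) + statistics.variance(control)) / N_PER_GROUP) ** 0.5
    return diff, diff / se


rng = random.Random(0)  # fixed seed so the run is repeatable
studies = [run_study(rng) for _ in range(N_STUDIES)]

all_estimates = [diff for diff, _ in studies]
# Hypothetical publication filter: only positive, "significant" results get through.
published = [diff for diff, z in studies if z > 1.96]

mean_all = statistics.fmean(all_estimates)
mean_published = statistics.fmean(published)

print(f"true effect:                   {TRUE_EFFECT:.2f}")
print(f"mean estimate, all studies:    {mean_all:.2f}")
print(f"mean estimate, published only: {mean_published:.2f}")
print(f"share of studies published:    {len(published) / N_STUDIES:.1%}")
```

Under these assumptions, the average across all simulated studies sits close to the true effect, while the average of the "published" subset is inflated, because only studies that happened to draw a large estimate clear the filter. Real publication decisions are messier than a single cutoff, so treat this as a cartoon of the selection effect, not a model of any journal.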

I'd argue this is a more important story than any specific finding that failed to replicate. The famous examples (ego depletion, power poses, the Stanford Prison Experiment's methodological problems) get the headlines. But the structural issue is that we've built an incentive system that makes replication studies professionally costly to conduct, difficult to publish, and easy to ignore.

What "Reproducibility" Actually Measures

One thing worth flagging: the two Nature papers are measuring different things, and the distinction matters.

Computational reproducibility — can independent researchers re-run your code and get the same numbers? — is a lower bar than conceptual replication — does the underlying phenomenon appear when you run a new study with a new sample? A paper can be computationally reproducible (the math checks out) while still describing a finding that doesn't generalize beyond the original study's specific conditions.

The economics and political science paper appears focused on the computational end. That's valuable — it catches errors, undisclosed analytical choices, and data handling problems. But passing that test doesn't mean the finding is real. It means the finding is internally consistent.
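As a rough sketch of what a computational reproduction check amounts to: take the shared data, re-run the analysis, and compare the recomputed number with the one printed in the paper. The data, the "reported" coefficients, and the helper names `ols_slope` and `reproduces` below are all hypothetical; the actual protocol used in the Nature paper isn't available to me.

```python
import math
import statistics


def ols_slope(xs, ys):
    """Ordinary least squares slope for a single predictor."""
    mean_x = statistics.fmean(xs)
    mean_y = statistics.fmean(ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


def reproduces(reported, recomputed, decimals=2):
    """True if the recomputed value agrees with the reported one
    to within half a unit of the last reported decimal place."""
    return math.isclose(reported, recomputed, rel_tol=0.0, abs_tol=0.5 * 10 ** -decimals)


# Made-up "shared dataset" standing in for an author's replication files.
xs = [1, 2, 3, 4, 5]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]

recomputed = ols_slope(xs, ys)

# Two hypothetical published coefficients: one matches the data, one does not.
print("reported 1.99 reproduces:", reproduces(1.99, recomputed))
print("reported 2.35 reproduces:", reproduces(2.35, recomputed))
```

Note what the check does and doesn't do. It confirms that the published number follows from the published data and method. It says nothing about whether a fresh sample would show the same slope.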

Social psychology's replication problems have mostly been at the conceptual level: effects that showed up in a 2008 undergraduate sample at a single American university turning out to be much smaller, or absent entirely, when tested more broadly. Computational reproducibility wouldn't have caught those.

The Meta-Science Moment

The Nature Index survey of 6,000 researchers published this month found that funding pressures and publishing trends loom large in how scientists think about their own work. That's not surprising — but it's worth sitting with. The researchers who produce the findings we're trying to replicate are operating inside the same incentive structure that makes replication difficult. They know the system rewards novelty. They know data sharing creates vulnerability to criticism. They know replication studies won't help their careers.

The two Nature papers are a useful diagnostic. But a diagnosis isn't a treatment. Watch for whether journals — especially the high-impact ones that set the norms — start revising their submission criteria to explicitly welcome replication work. That's the policy lever that actually matters here.

Bottom Line: About one in four of the sampled social science papers, published between 2009 and 2018, had data available for reproducibility assessment. That's the floor of the problem, not the ceiling. The replication crisis isn't primarily about which famous findings fail to hold up. It's about a publishing system that structurally discourages the checks that would tell us.