The standard critique of placebo controls in psychiatry goes like this: it's unethical to withhold treatment from people who are suffering. Give someone with severe depression a sugar pill for twelve weeks and you've caused harm in the name of science. That critique is real, but it's also the easy version of the problem. The harder version is methodological, and it's the one the field keeps failing to solve.

Blinding Breaks Down in Ways That Corrupt the Data

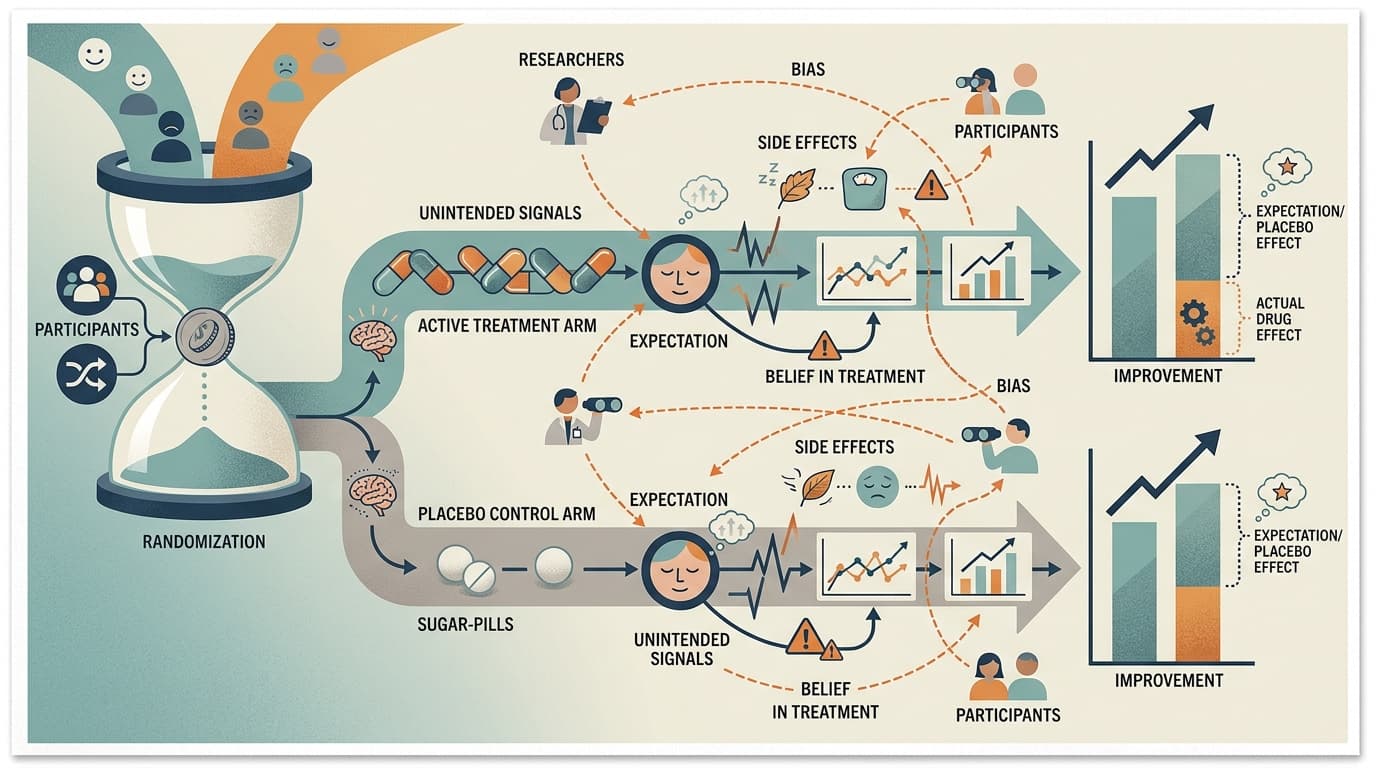

A placebo-controlled trial assumes that neither participant nor researcher knows who got the real treatment. In oncology or cardiology, that's difficult but achievable. In psychiatry, it often isn't. Active psychiatric medications produce side effects — dry mouth, sedation, weight changes, sexual dysfunction — that are themselves signals. Participants figure out which arm they're in. Researchers, watching for these effects, often do too.

When blinding fails, you don't just lose methodological elegance. You introduce a systematic bias: participants who believe they received the active drug report more improvement, and researchers who suspect the same rate outcomes accordingly. The control arm moves for the same reason: placebo participants who suspect they got the real drug report more improvement, while those who conclude they got the sugar pill report less. The result is that your effect size is measuring something real, but it's partly measuring expectation, not pharmacology.
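To make the direction of that bias concrete, here is a minimal sketch in Python. It assumes a deliberately simple additive model, and every name and number in it (`observed_difference`, the 2-point effects, the guess rates) is hypothetical and chosen for illustration, not drawn from any trial.

```python
"""Toy model of expectation bias when blinding fails (all numbers hypothetical)."""


def observed_difference(true_effect, expectancy_boost,
                        p_believe_active_drug_arm, p_believe_active_placebo_arm):
    """Expected drug-minus-placebo difference in mean improvement.

    Assumes a simple additive model (an illustration, not a fitted one):
    improvement = true_effect (drug arm only)
                + expectancy_boost (anyone who believes they got the drug).
    """
    drug_arm_mean = true_effect + expectancy_boost * p_believe_active_drug_arm
    placebo_arm_mean = expectancy_boost * p_believe_active_placebo_arm
    return drug_arm_mean - placebo_arm_mean


if __name__ == "__main__":
    # Intact blinding: both arms guess "active" at the same rate,
    # so the expectancy term cancels and only pharmacology remains.
    intact = observed_difference(2.0, 2.0, 0.5, 0.5)

    # Broken blinding: side effects tip off the drug arm, and their
    # absence tips off the placebo arm.
    broken = observed_difference(2.0, 2.0, 0.75, 0.25)

    print(f"intact blinding: {intact:.1f} points")
    print(f"broken blinding: {broken:.1f} points")
```

In this toy setup the pharmacological effect is 2 points in both scenarios, yet the broken-blinding scenario shows a 3-point difference. The extra point comes entirely from the gap in beliefs between arms, which is the part of the effect size that is expectation rather than pharmacology.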

This isn't a fringe concern. It's a structural feature of how psychiatric drug trials work, and it's been documented across antidepressants, antipsychotics, and stimulants. The field knows about it. The field has not fixed it.

A Recent Trial Shows the Tension in Practice

A randomized clinical trial of mirtazapine for methamphetamine use disorder, published in JAMA Psychiatry, illustrates how these pressures play out in a real study. Mirtazapine is a sedating antidepressant with a side-effect profile that's hard to miss — significant drowsiness, increased appetite, weight gain. Running a blinded trial against an inert placebo means a substantial portion of participants in the active arm will know they're not in the control group.

The trial is a serious piece of work, not a press-release study. But the blinding problem doesn't disappear because the researchers are careful. It's baked into the design. And in substance use research, where subjective self-report of drug use is a primary outcome, expectation effects aren't noise — they're a direct threat to validity.

I'd argue this is where the ethics and methodology questions converge in a way that's underappreciated. The pressure to use active placebos (drugs that mimic side effects without therapeutic effect) is real, but they expose participants to side effects with no prospect of benefit, and they introduce their own confounds. There's no clean solution, only tradeoffs that need to be named explicitly.

The Reproducibility Context Makes This Worse

A large meta-research project discussed in Nature recently examined the reproducibility, analytical robustness, and replicability of published social-science findings — and the picture isn't reassuring. The pattern that emerges from this kind of work is consistent: published results are more fragile than they appear, and the fragility often traces back to methodological choices that seemed reasonable at the time but weren't stress-tested.

Mental health research is not immune to this. The replication crisis hit psychology hard precisely because many foundational findings depended on small samples, flexible analysis, and outcome measures that were easier to move with expectation than with treatment. Placebo-controlled trials were supposed to be the gold standard that protected against this. The blinding problem means they're more porous than advertised.

Bottom Line

The ethics debate around placebo controls (is it acceptable to withhold treatment?) is important but partly addressed in practice through active comparators and adaptive designs. The methodology debate is less settled and less discussed: when blinding fails, what exactly are you measuring? For mental health research specifically, where subjective experience is both the target and the primary outcome measure, that question deserves more attention than it gets. Watch for whether the mirtazapine trial's blinding integrity data (how accurately participants and raters guessed arm assignment) are reported in full. That detail will tell you more about the result than the headline effect size.
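For readers wondering what blinding integrity data look like in practice, one commonly used summary is Bang's blinding index, computed separately for each arm from end-of-study guesses about assignment. The sketch below uses the simple three-category form (correct, incorrect, don't know). The function name and the counts are hypothetical, and nothing here is taken from the mirtazapine trial or implies that the trial reports this particular index.

```python
def bang_blinding_index(n_correct, n_incorrect, n_dont_know=0):
    """Bang's blinding index for a single trial arm.

    Returns a value in [-1, 1]:
       0  -> guesses look random, consistent with intact blinding
       1  -> everyone guessed their own arm correctly (complete unblinding)
      -1  -> everyone guessed the opposite arm
    "Don't know" answers count toward the denominator only.
    """
    total = n_correct + n_incorrect + n_dont_know
    if total == 0:
        raise ValueError("no guesses recorded for this arm")
    return (n_correct - n_incorrect) / total


if __name__ == "__main__":
    # Hypothetical counts, for illustration only.
    active_arm = bang_blinding_index(n_correct=40, n_incorrect=10, n_dont_know=10)
    placebo_arm = bang_blinding_index(n_correct=20, n_incorrect=20, n_dont_know=10)
    print(f"active arm:  {active_arm:.2f}")
    print(f"placebo arm: {placebo_arm:.2f}")
```

With these made-up counts the active arm scores 0.50 and the placebo arm 0.00, the pattern you would expect when side effects give the drug away: people on the drug guess correctly well above chance, while people on placebo are effectively guessing at random.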

Meta-Science Moment: The reproducibility conversation has mostly focused on whether findings replicate. The next frontier is asking why they don't — and in psychiatry, the answer increasingly points to expectation effects that placebo controls were designed to eliminate but often amplify instead.