There's a counterintuitive finding buried in a recent arXiv preprint that deserves more attention than it's likely to get: among published papers, the ones that receive the harshest peer review tend to go on to be cited more.

Not the smoothest ones. Not the ones reviewers waved through. The ones that got roughed up.

Harder Review, Higher Impact — But Read That Carefully

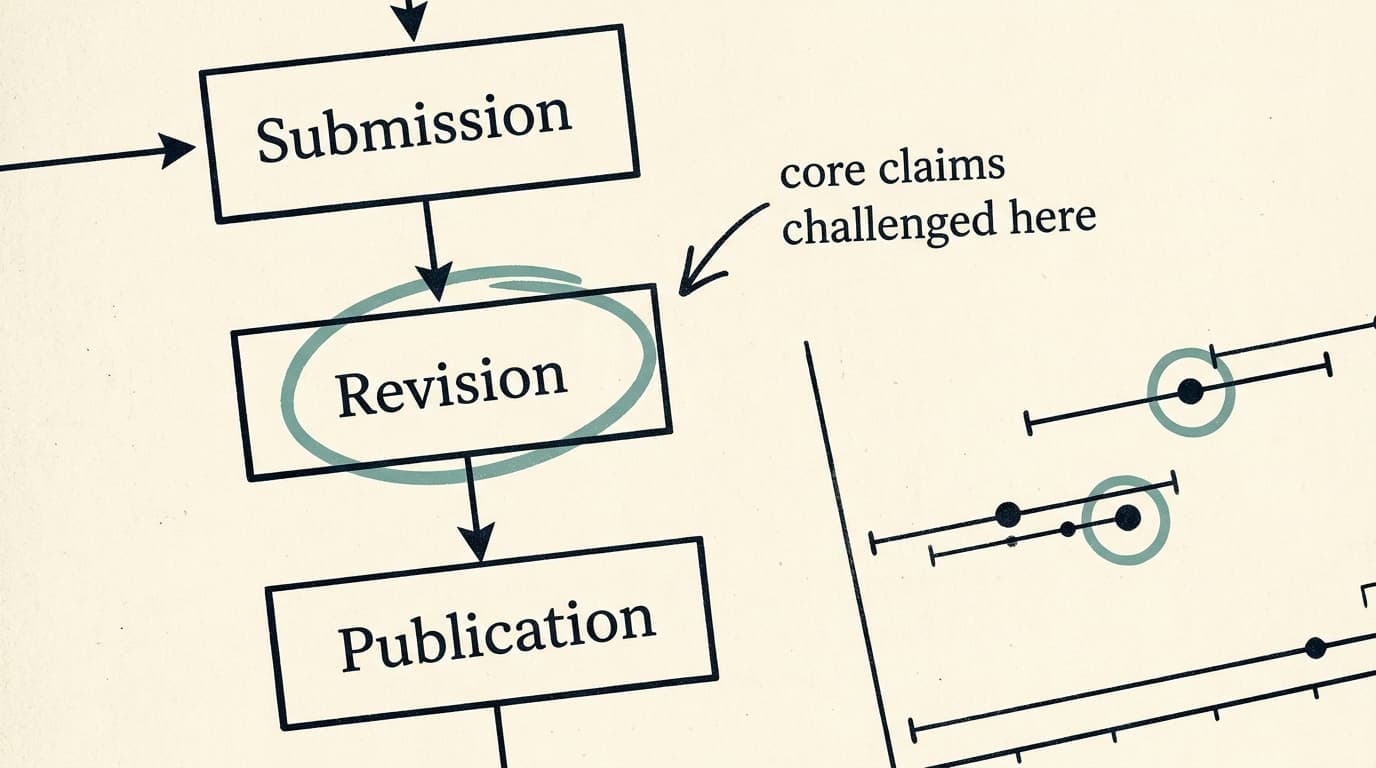

The study, posted earlier this month, analyzed peer review correspondence from Nature Communications papers published between 2017 and 2024, using a large language model pipeline to convert unstructured reviewer-author exchanges into structured data. The researchers found that review pressure concentrates in the first round and focuses disproportionately on core claims rather than presentation. More importantly, they found that stronger criticism, higher-quality reviewer comments, and greater revision burden are all associated with higher later citation impact among accepted papers.

Pause on that framing: among accepted papers. This is a crucial methodological constraint. The study can only observe papers that made it through — it tells us nothing about the brilliant work that got rejected, or the mediocre work that slipped past tired reviewers. Selection effects are doing real work here, and the authors are appropriately careful about this. But the finding still cuts against a common complaint: that peer review is mostly gatekeeping theater that slows down good science without improving it.
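To see why conditioning on acceptance matters, here is a toy simulation. It is a sketch under invented assumptions, not a reanalysis of the study: the acceptance rule, the noise levels, and every variable name are hypothetical. Criticism and quality are generated independently, citations depend only on quality, and a harshly criticized paper needs higher quality to be accepted.

```python
import random


def pearson(xs, ys):
    """Plain Pearson correlation, written out to avoid extra dependencies."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


rng = random.Random(0)  # fixed seed so the run is repeatable
papers = []
for _ in range(20000):
    quality = rng.gauss(0, 1)
    criticism = rng.gauss(0, 1)                # independent of quality by construction
    citations = quality + rng.gauss(0, 0.5)    # citations depend on quality only
    # Hypothetical rule: the harsher the criticism, the higher the bar to survive it.
    accepted = quality > 0.5 + 0.8 * criticism
    papers.append((criticism, citations, accepted))

corr_all = pearson([p[0] for p in papers], [p[1] for p in papers])
accepted_papers = [p for p in papers if p[2]]
corr_accepted = pearson(
    [p[0] for p in accepted_papers], [p[1] for p in accepted_papers]
)

print(f"all submissions: {corr_all:+.2f}")      # close to zero
print(f"accepted only:   {corr_accepted:+.2f}")  # clearly positive
```

In this toy world criticism does nothing to improve a paper, yet among accepted papers it still correlates with citations. That shows selection could produce such a pattern, not that it does in the real data, so it leaves open the question of whether review is only theater.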

The data suggest review is more than theater. Demanding review appears to be a feature, not a bug, at least when it's focused on substance. The study also found that reviewer disagreement increases with the average strength of opinions, which is a polite way of saying: the more strongly reviewers feel about a paper, the more they fight about it. Fields differ more in review style than review length, pointing to disciplinary cultures around how criticism gets negotiated rather than how much of it gets produced.

What "Demanding" Actually Means

Here's where I'd push back slightly on how this finding might get reported elsewhere. "Demanding peer review" is not the same as slow peer review, or hostile peer review, or review that demands authors cite the reviewer's own papers (a practice that exists and is not subtle). The study is measuring something specific: the quality and depth of criticism directed at core scientific claims, and the extent of revision that follows.

That's a meaningful distinction. A paper can sit in review for eighteen months because a journal is understaffed, or because one reviewer keeps asking for additional experiments that don't materially change the conclusions. That's not demanding review in the sense this study means — that's friction without signal. The finding here is about substantive challenge producing substantive revision, which then produces work that the field actually uses.
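The distinction can be made concrete with a small sketch. The record layout below is hypothetical (the field names and the `substantive_challenge` helper are mine, not the study's schema), and the two papers are hand-written examples rather than real data. The point is only that time in review and substantive challenge are separate measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewComment:
    round_no: int             # review round in which the comment was raised
    targets_core_claim: bool  # aimed at a central claim rather than presentation
    led_to_revision: bool     # authors changed the paper in response


@dataclass(frozen=True)
class PaperReview:
    label: str
    months_in_review: int
    comments: tuple


def substantive_challenge(paper):
    """Count comments that hit a core claim and produced a revision."""
    return sum(
        1 for c in paper.comments if c.targets_core_claim and c.led_to_revision
    )


# Eighteen months in review, but little that touches the conclusions.
slow_paper = PaperReview("slow, low signal", 18, (
    ReviewComment(1, False, True),
    ReviewComment(2, True, False),   # extra experiment requested, conclusions unchanged
    ReviewComment(3, False, False),
))

# Five months in review, with core claims challenged and revised in round one.
demanding_paper = PaperReview("fast, demanding", 5, (
    ReviewComment(1, True, True),
    ReviewComment(1, True, True),
    ReviewComment(1, False, True),
))

for paper in (slow_paper, demanding_paper):
    print(paper.label, paper.months_in_review, substantive_challenge(paper))
```

Sorting these two papers by months in review and by substantive challenge gives opposite rankings, which is the gap between friction and signal.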

The ReviewBench preprint posted around the same time comes at the pressure on peer review from a different angle: manuscript volume is rising faster than the available pool of expert reviewers, and AI tools are being tested as a response. Whether AI review can replicate the kind of substantive challenge this study associates with impact is, to put it gently, an open question.

The Meta-Science Moment: What Gets Measured Gets Managed

Citation impact is a deeply imperfect proxy for scientific quality, and the authors of the arXiv study know this. Citations measure influence, not truth. A paper can be highly cited because it's wrong in an interesting way, or because it established a method that everyone uses while quietly ignoring its limitations.

But there's something worth sitting with here: if demanding review predicts citation impact, and citation impact is what universities and funding bodies use to evaluate researchers, then there's an institutional argument, not just a scientific one, for protecting rigorous review processes from the pressure to accelerate. The push to make peer review faster is real and not entirely misguided. But speed and rigor are in tension, and this study suggests the tension isn't symmetric. Faster review that sacrifices substantive challenge may be producing papers that are published but were never really tested.

Elisabeth Bik's decade of image-duplication work, recently revisited by Retraction Watch, is a useful counterpoint. Her original manuscript was rejected four or five times before she posted it directly as a preprint. The review process failed to recognize one of the most consequential papers in scientific integrity research. Demanding review is valuable. Demanding review that's also accurate is rarer.

Bottom Line

Good peer review isn't just a filter — it's a forge. The arXiv data suggests that papers pushed hardest on their core claims come out stronger, and the field notices. The implication isn't that we should make review slower or more punishing for its own sake. It's that the substance of criticism matters more than its volume or its speed. Watch for whether this finding gets picked up in the ongoing policy debates around AI-assisted review — because "faster" and "more demanding" are not the same thing, and conflating them would be a costly mistake.