The average paper accepted by Nature spends somewhere between 8 and 14 months in the pipeline from submission to publication. That's not a bug. It's closer to the intended design. What's worth examining is what actually happens inside that window — and whether the time is being spent on the right things.

The Clock Starts Later Than You Think

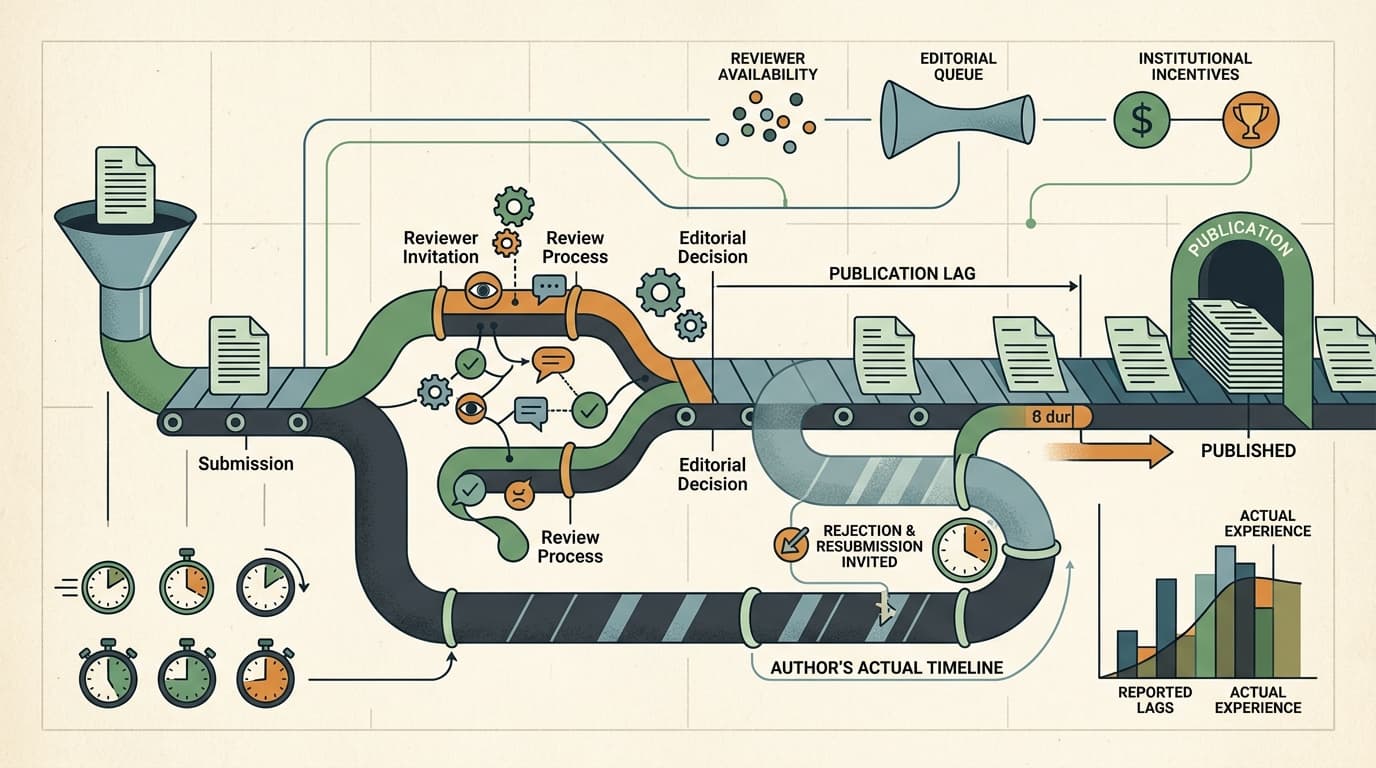

A detailed analysis of cell biology journal lag times published earlier this month by researcher Stephen Royle, using PubMed submission and publication records, illustrates something that gets lost in the standard "peer review is slow" complaint: the timeline varies enormously by journal, and the variation isn't random. Some journals appear to "restart the clock" by rejecting a paper and inviting resubmission — a practice that makes their official lag times look shorter than the author's actual experience. The data is only as clean as what journals report to PubMed, and some journals report selectively.
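To make the "restart the clock" effect concrete, here is a minimal sketch of the arithmetic. The dates are invented for illustration and the `lag_days` helper is hypothetical. Nothing here comes from Royle's data or code. It only shows how a lag measured from a resubmission date can understate what the author experienced.

```python
from datetime import date


def lag_days(received: date, published: date) -> int:
    """Days between a recorded submission date and the publication date."""
    return (published - received).days


# Hypothetical dates, invented purely for illustration.
first_submission = date(2023, 1, 10)  # when the author first submitted
resubmission = date(2023, 7, 3)       # after a reject-and-resubmit decision
published = date(2023, 11, 20)

# If the journal records the resubmission date as "received",
# the official lag is measured from the later date.
official_lag = lag_days(resubmission, published)

# The author's experience starts at the first submission.
experienced_lag = lag_days(first_submission, published)

# Time that disappears from the official record.
hidden_days = experienced_lag - official_lag

print(f"official lag:    {official_lag} days")
print(f"experienced lag: {experienced_lag} days")
print(f"hidden by reset: {hidden_days} days")
```

In this made-up case more than half of the author's wait never shows up in the journal's reported lag, which is why comparisons built on reported dates should be read with some caution.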

This matters because when we talk about how long good science takes, we're often measuring the wrong thing. Publication lag is a proxy. What it actually captures is a mixture of genuine scientific scrutiny, editorial queue management, reviewer availability, and institutional incentives that have nothing to do with the quality of the work.

Where the Time Goes at High-Prestige Journals

At Nature specifically, the pipeline breaks down into stages that reveal where the real bottlenecks sit. The journal desk-rejects roughly 90–95% of submissions, usually within 2–6 weeks. Papers that survive that cut spend another 2–4 weeks in reviewer recruitment before external peer review even begins. The review period itself runs 4–8 weeks. The first decision after review typically arrives 3–6 months after submission, and revision cycles then add several more months on top.

The practical implication is that the majority of the timeline isn't peer review — it's everything around peer review. Recruitment delays, editorial queue management, author revision time. A paper can spend more time waiting for a reviewer to agree to participate than it spends actually being reviewed.
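As a rough check on that claim, the stage ranges quoted above can be added up and compared with the 8–14 month pipeline. This is a back-of-the-envelope sketch, not a model of Nature's actual workflow: the stage names, the weeks-per-month conversion, and the decision to count only first-round external review are all assumptions made for illustration.

```python
# Stage ranges in weeks (low, high), taken from the figures quoted in the text.
stages_weeks = {
    "desk decision": (2, 6),
    "reviewer recruitment": (2, 4),
    "external review": (4, 8),
}

WEEKS_PER_MONTH = 52 / 12  # assumption: average month length


def total_range(stages):
    """Sum the low ends and the high ends of each stage separately."""
    low = sum(lo for lo, _ in stages.values())
    high = sum(hi for _, hi in stages.values())
    return low, high


low_weeks, high_weeks = total_range(stages_weeks)

# Full submission-to-publication window quoted in the text: 8 to 14 months.
pipeline_months = (8, 14)
pipeline_weeks = tuple(m * WEEKS_PER_MONTH for m in pipeline_months)

# Share of the whole pipeline spent in first-round external review.
review_lo, review_hi = stages_weeks["external review"]
min_share = review_lo / pipeline_weeks[1]  # shortest review, longest pipeline
max_share = review_hi / pipeline_weeks[0]  # longest review, shortest pipeline

print(f"itemized stages: {low_weeks}-{high_weeks} weeks")
print(f"review share of pipeline: {min_share:.0%} to {max_share:.0%}")
```

Under these assumptions, first-round review accounts for somewhere between about 7% and 23% of the full window. The three itemized stages also sum to 8–18 weeks, which is shorter than the quoted 3–6 months to a first decision, so some editorial handling time is presumably not itemized. Revision rounds can include further review, so the true share may be somewhat higher.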

That's not an argument that peer review is fast. It's an argument that "peer review is slow" is an imprecise diagnosis. The bottleneck is distributed across a system that wasn't designed for the volume of papers it now processes.

The Replication Problem Sits Underneath All of This

Here's the uncomfortable part. All that time (the months of review, the revision cycles, the editorial deliberation) doesn't reliably produce findings that hold up. Work recently published in Nature found that only about half of social science research findings held up when checked against three criteria: replicability, reproducibility, and robustness. Half.

That number should reframe the timeline question entirely. The issue isn't just that peer review takes too long. It's that the time being spent isn't consistently catching the problems that matter most. Reviewers evaluate whether a paper is plausible and well-written. They rarely have the time, data access, or incentive to check whether the results would survive an independent replication attempt.

The Pressure to Accelerate Is Making This Worse

There's a structural tension that doesn't get enough attention: researchers are evaluated on publication counts and prestige, not on whether their findings replicate. Short contracts, grant cycles, and hiring committees that count papers create pressure to publish faster and in higher-profile venues. The journals with the longest timelines are also the ones with the most career value attached to them — which means researchers are simultaneously incentivized to chase slow journals and to produce results quickly enough to survive the next review cycle.

Preprints were supposed to relieve some of this pressure by separating "sharing science" from "getting credit for science." Royle's analysis notes exactly this: preprints circumvent publication delays, but publication remains the currency for evaluation. The pressure hasn't shifted; preprints have just added a new layer on top of it.

A Forbes piece from April 13 flagged an AI system that automated the full arc of scientific research and passed peer review — which is either a solution to the throughput problem or a demonstration that peer review's quality floor is lower than advertised, depending on your level of optimism.

Bottom Line: Good science probably does take 8–14 months to publish, and that timeline is defensible. What's harder to defend is a system where that time doesn't reliably filter out findings that won't replicate. The question worth asking isn't how to make peer review faster — it's what the time is actually being used to verify, and whether that matches what we need it to catch.