The word does a lot of work it hasn't earned.

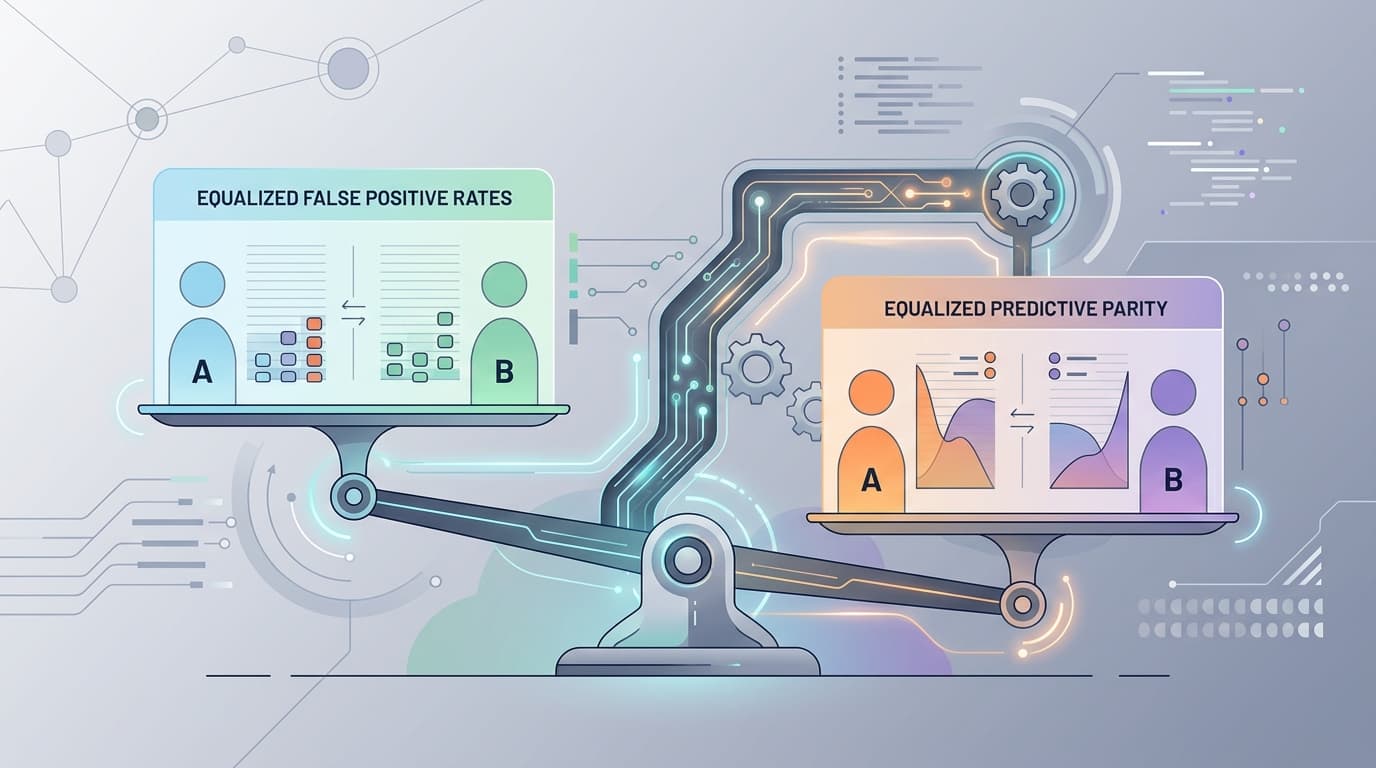

When researchers publish an AI bias study and report that their model achieves "fairness," they almost always mean one specific, narrow thing: that some chosen metric is equalized across demographic groups. Maybe false positive rates. Maybe selection rates. Maybe positive predictive value, the criterion known as predictive parity. The choice of metric is a methodological decision, and it's almost never explained in the headline or the press release.

The problem isn't that researchers are being dishonest. It's that these metrics are genuinely incompatible with each other. When groups have different base rates, which is the usual real-world condition, a classifier that equalizes both false positive and false negative rates across groups cannot also achieve predictive parity unless it predicts perfectly. You can satisfy one definition of fairness or another. Not both. This has been established in the technical literature for years, and it still gets buried in the methods section while the abstract announces the model is "fair."
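One way to see the tension is through Bayes' rule: once a group's base rate, false positive rate, and false negative rate are fixed, its positive predictive value is fixed too. The sketch below is illustrative only. The function name `ppv` and the two base rates are assumptions chosen for the example, not figures from any study.

```python
def ppv(base_rate, fpr, fnr):
    """Positive predictive value implied by a base rate and error rates (Bayes' rule)."""
    tpr = 1.0 - fnr
    true_pos = base_rate * tpr            # share of the group correctly flagged
    false_pos = (1.0 - base_rate) * fpr   # share of the group wrongly flagged
    return true_pos / (true_pos + false_pos)

# Hypothetical setup: identical error rates for both groups,
# but different base rates (50% vs. 20%).
FPR, FNR = 0.2, 0.2
ppv_a = ppv(base_rate=0.5, fpr=FPR, fnr=FNR)
ppv_b = ppv(base_rate=0.2, fpr=FPR, fnr=FNR)

print(f"group A PPV: {ppv_a:.2f}")
print(f"group B PPV: {ppv_b:.2f}")
```

With error rates held equal, a positive prediction is right about 80% of the time for group A and about 50% of the time for group B. Closing that gap would require letting the error rates diverge.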

The Metric You Pick Is the Argument You're Making

A recent preprint on peer review recommendation systems illustrates the pattern cleanly. The researchers apply "fairness regularization" to reduce bias in how papers get matched to reviewers — a genuine problem worth solving. Their fairness intervention works, by their chosen measure. But the paper is also candid about something most aren't: the fairness parameters behave differently depending on which regime you're in, and the gains are most visible in "highly biased" conditions. In less biased settings, the improvement is modest.

That's honest reporting. It's also a reminder that what a fairness metric detects depends heavily on where you point it. A model can score well on the metric you measured and still encode the bias you didn't.
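A toy example makes the point concrete. The sketch below computes three common metrics per group from labels and predictions. The data and the helper `group_metrics` are invented for illustration and have nothing to do with the preprint's method or results.

```python
from collections import defaultdict

def group_metrics(y_true, y_pred, groups):
    """Return selection rate, false positive rate, and PPV for each group."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for t, p, g in zip(y_true, y_pred, groups):
        # "t"/"f" = prediction correct or not; "p"/"n" = predicted positive or negative
        key = ("t" if t == p else "f") + ("p" if p == 1 else "n")
        counts[g][key] += 1
    out = {}
    for g, c in counts.items():
        total = sum(c.values())
        predicted_pos = c["tp"] + c["fp"]
        actual_neg = c["fp"] + c["tn"]
        out[g] = {
            "selection_rate": predicted_pos / total,
            "fpr": c["fp"] / actual_neg if actual_neg else float("nan"),
            "ppv": c["tp"] / predicted_pos if predicted_pos else float("nan"),
        }
    return out

# Hypothetical data: ten people per group, 1 = positive outcome / positive prediction.
y_true = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0] + [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 1, 0, 1, 0, 0, 0, 0] + [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
groups = ["A"] * 10 + ["B"] * 10

metrics = group_metrics(y_true, y_pred, groups)
for g, m in sorted(metrics.items()):
    print(g, {k: round(v, 3) for k, v in m.items()})
```

Both groups are selected at the same 50% rate, so a study reporting only selection rates would call this model fair. The false positive rate is 0.2 for group A and 0.375 for group B, and the positive predictive value is 0.8 versus 0.4.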

What "Recourse" Reveals That Accuracy Hides

The sharper critique comes from a different direction. A preprint on algorithmic systems in healthcare and administrative contexts asks a question most fairness studies skip entirely: even if the model is "fair" by some metric, can the people it affects actually do anything about a wrong decision?

Their answer, derived from federal datasets and algorithmic audit studies, is almost comically grim. Under baseline conditions, the end-to-end probability that someone successfully challenges an automated decision — what the authors call "recourse" — is 0.0018%. Removing any single barrier to recourse improves that figure by less than 0.02%.

That number deserves to sit for a moment. Fairness metrics measure whether the model treats groups similarly. They say almost nothing about whether the system is accountable to the individuals it affects. A model can be "fair" in the technical sense while being practically unreviewable by the humans on the receiving end.
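To see why removing one barrier can barely move an end-to-end figure, consider a minimal sketch that assumes recourse is a chain of independent stages whose success probabilities multiply. That structure is an assumption here, not something confirmed from the preprint, and the stage names and values below are entirely hypothetical.

```python
from math import prod

# Hypothetical stages and probabilities, invented for illustration only.
stages = {
    "notices_error": 0.10,
    "learns_appeal_exists": 0.10,
    "files_appeal": 0.10,
    "gets_human_review": 0.10,
    "decision_reversed": 0.10,
}

def end_to_end(probs):
    """Probability of clearing every stage, assuming the stages are independent."""
    return prod(probs.values())

def without_barrier(probs, name):
    """End-to-end probability if one stage is made certain (barrier removed)."""
    return end_to_end({**probs, name: 1.0})

baseline = end_to_end(stages)
print(f"baseline: {baseline * 100:.4f}%")
for name in stages:
    improved = without_barrier(stages, name)
    gain_pp = (improved - baseline) * 100
    print(f"remove {name}: {improved * 100:.4f}% (+{gain_pp:.4f} percentage points)")
```

Under these made-up numbers, eliminating any one barrier entirely still leaves the overall success rate at about 0.01%, because the remaining stages keep multiplying it down.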

The Subnetwork Problem

Meanwhile, a separate line of research is probing whether bias can be surgically removed from a model trained on biased data. A recent arXiv paper on finding unbiased subnetworks explores whether you can extract a less-biased sub-model from a biased one — without retraining from scratch. The approach is technically interesting. But the researchers are careful to note the obvious catch: if you fine-tune that subnetwork on the same biased training set, you're working against yourself.

I'd argue this is the methodological trap in miniature. The intervention is real, but the data environment it operates in hasn't changed. Fairness techniques applied downstream of a biased data pipeline are doing cleanup work, not structural repair.

Bottom Line: The next time a study reports that an AI system is "fair," the first question isn't whether to believe it. It's: fair by which metric, measured how, and what happens to the people the model gets wrong? Those questions don't appear in most abstracts. They should be the lede.