Most teams treat oncall as a staffing problem. Someone has to be paged; someone has to respond. The rotation exists to distribute that burden. Fair enough. But if that's the whole frame, you're leaving the most useful signal in your production environment sitting unread.

The rotation isn't just a schedule. It's a longitudinal record of where your system is actually fragile — and where your team's understanding of it is incomplete.

What the Rotation Reveals That Your Dashboards Don't

Dashboards show you what you instrumented. Oncall shows you what you missed.

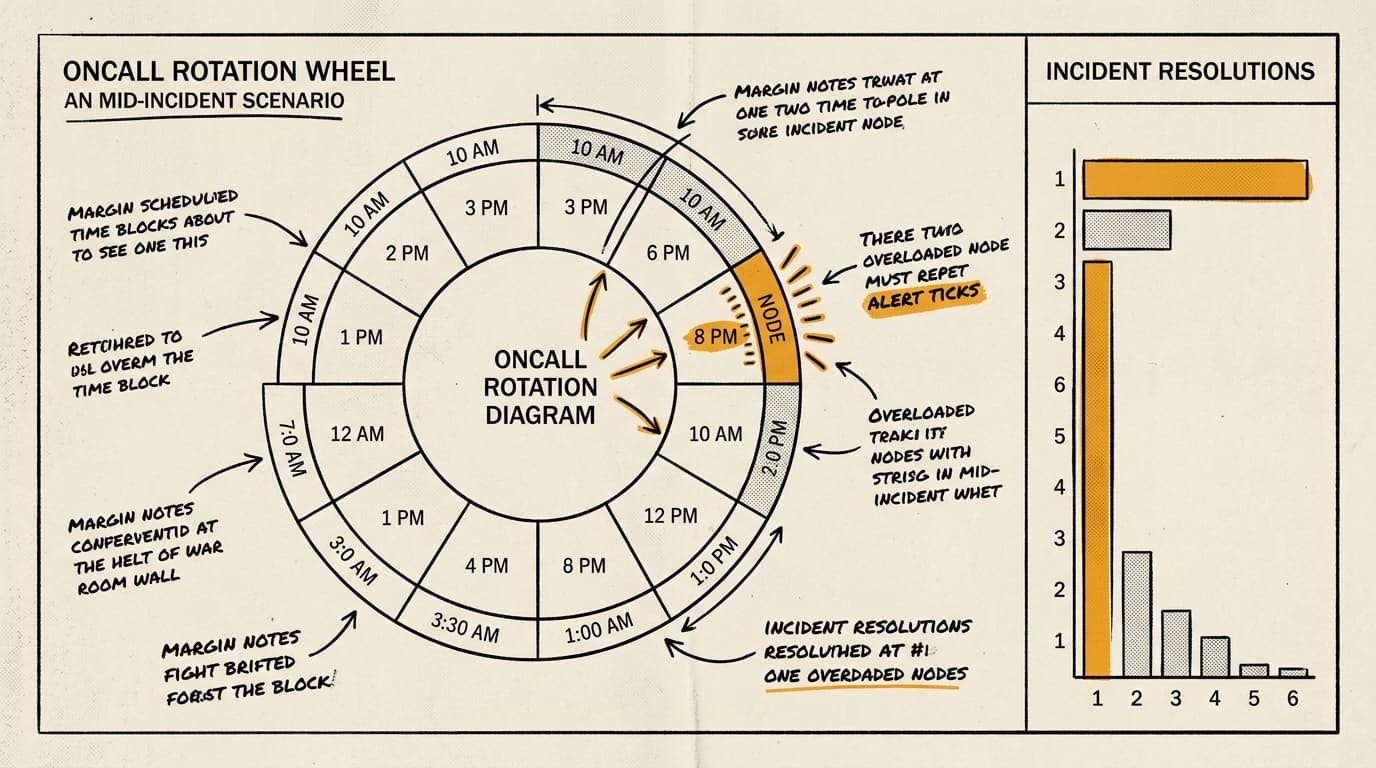

When the same engineer gets paged three times in a month for the same service, that's not bad luck. It's a pattern. Either the service has a recurring failure mode that nobody has fixed, or the runbook is wrong, or the alert threshold is miscalibrated, or the fix requires access that only that engineer has — which is its own kind of fragility.

Yet most teams track mean time to resolution (MTTR) without tracking who resolved each incident or how many times the same alert fired. Those two omissions hide a class of problems that MTTR alone can't surface: the hero engineer, the zombie alert, the fix that closes the incident without addressing the cause.

A zombie alert is one that fires, gets acknowledged, gets resolved, and fires again next week. Every team has them. The question is whether you're counting them. If your incident tooling doesn't make it trivially easy to see alert recurrence rates by service, you're flying partially blind.
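One way to start counting, sketched in Python under some assumptions: your incident tool can export alert firings as (service, alert name, timestamp) records, and a zombie is approximated as the same alert firing again within seven days of its previous firing. The `AlertEvent` type, the `recurrence_rate_by_service` function, and the seven-day window are hypothetical, not any particular tool's schema or API.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class AlertEvent:
    # Hypothetical export schema; map your incident tool's fields onto it.
    service: str
    alert: str
    fired_at: datetime


def recurrence_rate_by_service(events, window=timedelta(days=7)):
    """Share of each service's firings that repeat the same alert
    within `window` of its previous firing (a rough zombie-alert signal)."""
    firings = defaultdict(list)
    for e in events:
        firings[(e.service, e.alert)].append(e.fired_at)

    totals = defaultdict(int)
    repeats = defaultdict(int)
    for (service, _alert), times in firings.items():
        times.sort()
        totals[service] += len(times)
        # Compare each firing with the previous firing of the same alert.
        for prev, curr in zip(times, times[1:]):
            if curr - prev <= window:
                repeats[service] += 1
    return {service: repeats[service] / totals[service] for service in totals}


events = [
    AlertEvent("checkout", "HighLatency", datetime(2024, 5, 1)),
    AlertEvent("checkout", "HighLatency", datetime(2024, 5, 6)),
    AlertEvent("checkout", "HighLatency", datetime(2024, 5, 12)),
    AlertEvent("search", "DiskFull", datetime(2024, 5, 2)),
]
print(recurrence_rate_by_service(events))
```

This ignores whether each firing was acknowledged and resolved in between. If your tool exports resolution times, checking that the previous firing was closed before the next one brings the count closer to the fire, resolve, fire-again pattern.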

The Hero Engineer Problem Is an Operational Risk

There's a version of oncall health that looks fine on paper (incidents get resolved, SLAs are met, customers don't notice) and is actually a single point of failure waiting to happen.

It looks like this: one engineer on the team has deep knowledge of a critical service. They resolve incidents quickly because they know where the bodies are buried. The rotation continues. The MTTR looks good. Nobody asks why that engineer's incidents close faster than everyone else's.

Then that engineer takes a two-week vacation, or leaves the company, and suddenly the team is debugging a service they don't understand, with runbooks that assume context nobody else has, during an incident that would have taken the expert fifteen minutes.

I'd argue this is one of the most common forms of operational debt, and it's almost never visible until it's expensive. The fix isn't complicated: track resolution patterns by engineer, not just by service. If one person is resolving a disproportionate share of incidents for a given component, that's a knowledge distribution problem, not a staffing win.
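A minimal sketch of tracking resolution by engineer within each component, assuming incident records can be reduced to (component, resolver) pairs. The function name, the 50% share threshold, and the five-incident minimum are illustrative assumptions; pick values that fit your team's size and incident volume.

```python
from collections import Counter, defaultdict


def concentrated_components(incidents, threshold=0.5, min_incidents=5):
    """Flag components where a single resolver closed more than `threshold`
    of the incidents. `incidents` is an iterable of (component, resolver) pairs."""
    per_component = defaultdict(Counter)
    for component, resolver in incidents:
        per_component[component][resolver] += 1

    flagged = {}
    for component, counts in per_component.items():
        total = sum(counts.values())
        if total < min_incidents:
            continue  # too few incidents to call anything a pattern
        top_resolver, top_count = counts.most_common(1)[0]
        share = top_count / total
        if share > threshold:
            flagged[component] = (top_resolver, share)
    return flagged


incidents = (
    [("payments", "dana")] * 7
    + [("payments", "lee")] * 2
    + [("search", "lee"), ("search", "sam")] * 3
)
print(concentrated_components(incidents))
```

Here `payments` is flagged for `dana` and `search` is not: a component split evenly between two people is not above a strict 50% threshold, which matches the intuition that two people who know a service are less fragile than one.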

The corrective action is deliberate: pair that engineer with others during incidents, even when it's slower. Treat the slower resolution as an investment in redundancy. Write the runbook during the incident, not after, when the context is live and the steps are fresh.

Three Things Worth Auditing This Quarter

If you want to actually use your oncall rotation as a diagnostic tool rather than a burden-sharing mechanism, three audits are worth running:

Alert recurrence. For every alert that fired more than twice in the last 90 days without a corresponding postmortem or remediation ticket, ask why. Either the alert is noise (delete it or raise the threshold) or it's pointing at an unresolved problem (fix the problem). There is no third option that doesn't involve ignoring the signal.
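The 90-day audit reduces to a simple filter. This sketch assumes you can export firings as (alert name, timestamp) pairs and can list the alerts that already have a postmortem or remediation ticket linked; both inputs and the function name are hypothetical.

```python
from collections import Counter
from datetime import datetime, timedelta


def unexplained_recurring_alerts(firings, remediated, now, days=90, max_fires=2):
    """Alerts that fired more than `max_fires` times in the last `days` days
    and have no linked postmortem or remediation ticket.

    firings: iterable of (alert_name, fired_at) pairs.
    remediated: set of alert names that already have a postmortem or ticket.
    """
    cutoff = now - timedelta(days=days)
    recent = Counter(name for name, fired_at in firings if fired_at >= cutoff)
    # Most frequent offenders first; ties broken alphabetically.
    return sorted(
        ((name, count) for name, count in recent.items()
         if count > max_fires and name not in remediated),
        key=lambda item: (-item[1], item[0]),
    )


now = datetime(2024, 6, 30)
firings = (
    [("DiskFull", now - timedelta(days=d)) for d in (1, 10, 20, 40)]
    + [("HighLatency", now - timedelta(days=d)) for d in (5, 15, 25)]
    + [("CertExpiry", now - timedelta(days=d)) for d in (3, 100, 200)]
)
print(unexplained_recurring_alerts(firings, remediated={"HighLatency"}, now=now))
```

Every alert this returns belongs in one of the two buckets: retune or delete it as noise, or open a ticket for the problem it keeps pointing at.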

Resolution distribution. Pull incident data by resolver, not just by service. If the distribution is heavily skewed, you have a knowledge concentration problem. The goal isn't equal distribution — some engineers are more senior — but you should be able to explain the skew, not just observe it.

Runbook accuracy. In the last issue, I wrote about runbooks that lie. The oncall rotation is the fastest way to find them: ask the last three engineers who were paged to mark every step they skipped, modified, or had to figure out on their own. That markup is your remediation backlog.
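Turning that markup into a backlog is mostly a matter of agreeing on labels. The sketch below assumes each engineer's markup is a mapping from a runbook step ID to one of three hypothetical labels (skipped, modified, improvised) and merges them so the steps that tripped up the most people surface first.

```python
from collections import Counter

# Hypothetical labels for how an engineer deviated from a runbook step.
SKIPPED, MODIFIED, IMPROVISED = "skipped", "modified", "improvised"


def runbook_backlog(markups):
    """Merge several engineers' runbook markups into one remediation backlog.

    markups: list of dicts, one per engineer, mapping step id -> label.
    Returns (step, engineers_who_flagged_it, sorted_labels) tuples, with the
    steps that tripped up the most engineers first.
    """
    flagged_by = Counter()
    labels = {}
    for markup in markups:
        for step, label in markup.items():
            flagged_by[step] += 1
            labels.setdefault(step, set()).add(label)
    return sorted(
        ((step, count, sorted(labels[step])) for step, count in flagged_by.items()),
        key=lambda item: (-item[1], item[0]),
    )


markups = [
    {"step-3": SKIPPED, "step-7": IMPROVISED},
    {"step-3": MODIFIED},
    {"step-3": SKIPPED, "step-5": MODIFIED},
]
for step, count, how in runbook_backlog(markups):
    print(step, count, how)
```

A step that all three engineers flagged, even in different ways, is a stronger candidate for a rewrite than one a single person improvised around.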

None of this requires new tooling. It requires treating the rotation as data rather than duty.

The teams that run production well aren't the ones with the fewest incidents. They're the ones who learn something from every page — and build systems where the next page is a little less likely, or a little less painful, than the last one.