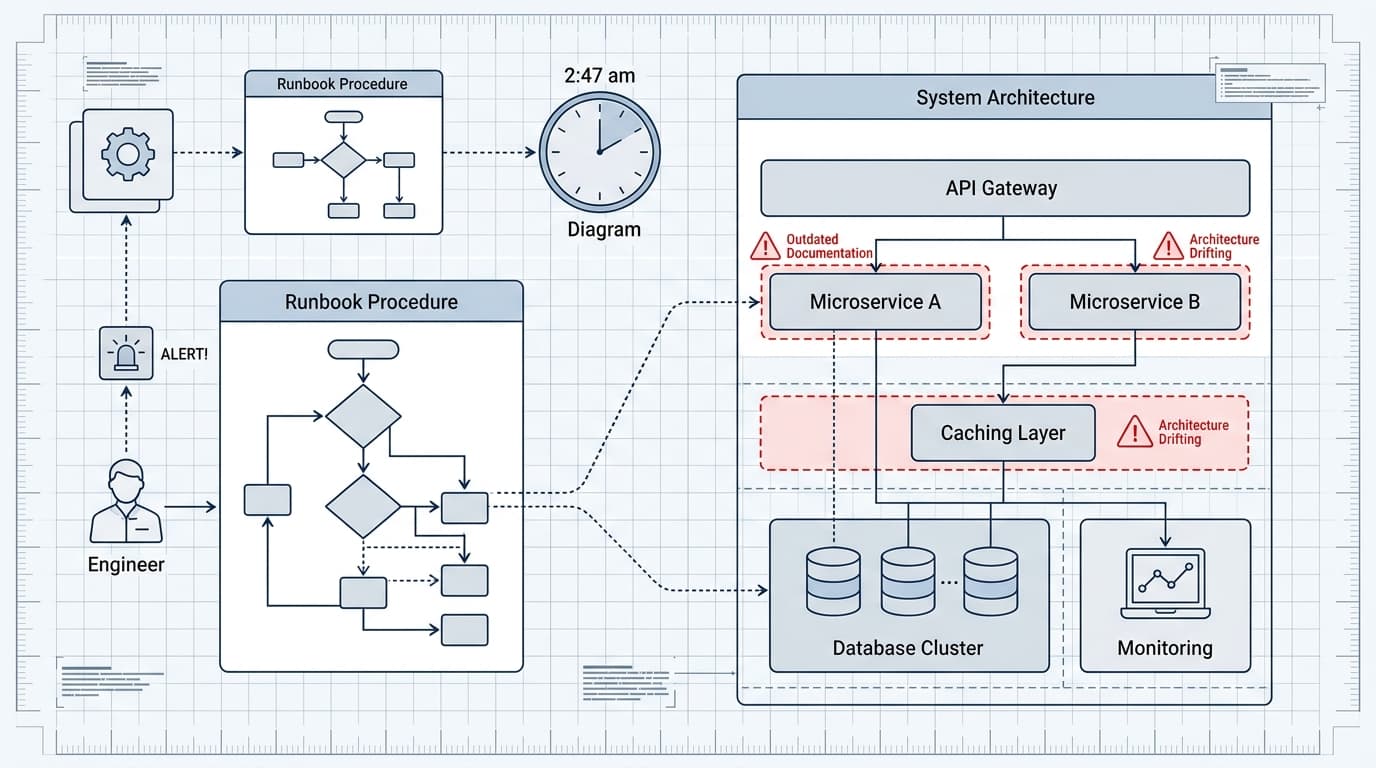

It's 2:47am. The alert fires. You find the runbook, follow it step by step, and the system gets worse. Somewhere between the last incident and this one, the architecture changed — a cache layer got added, a service got renamed, a threshold got tuned — and nobody updated the docs. You're now debugging a system that doesn't match its own documentation, under pressure, half-asleep.

This is not a rare failure mode. It's arguably the dominant failure mode in operational documentation, and most teams treat it as a documentation problem when it's actually a systems problem.

Runbooks Rot Because Nobody Owns the Decay

The standard advice is "keep your runbooks up to date." That's about as useful as "write better code." The real question is: what organizational mechanism actually causes runbooks to stay current?

Most teams have no such mechanism. Runbooks get written during or after an incident, when the pain is fresh and the motivation is high. They get used during the next incident, often months later, by someone who wasn't there for the first one. In between, the system changes — incrementally, invisibly — and the runbook doesn't.

The decay pattern is predictable. A runbook starts accurate. Engineers use it, trust it, stop questioning it. Then a migration happens and the service names change. Then a new engineer joins and adds a workaround without updating the canonical steps. Then someone removes a dependency and forgets to pull the corresponding runbook section. By the time the next serious incident hits, the runbook is a historical artifact dressed up as current procedure.

The failure isn't laziness. It's that runbook maintenance has no natural trigger. Code changes have CI. Infrastructure changes have Terraform diffs. Runbooks have nothing — they sit in a wiki or a doc folder, accumulating drift in silence.

The Fix Is Coupling Documentation to Change, Not to Incidents

If runbook maintenance only happens after incidents, you're always behind. The teams that maintain useful operational documentation tend to do something structurally different: they treat runbook updates as part of the change process, not the incident process.

Concretely, this means a few things. First, deployment checklists include a documentation review step — not "did you write docs?" but "do the existing runbooks for this service still reflect reality?" Second, postmortems explicitly audit the runbooks that were consulted during the incident, noting where they helped, where they were wrong, and where they were missing. Third, runbooks have owners — not a team, but a named person — who are responsible for keeping them current when their services change.

That last point is uncomfortable because it creates accountability. But "the team owns it" is operationally equivalent to "nobody owns it." Diffuse ownership is how you get a runbook that was last updated eighteen months ago and still references a database that was decommissioned.

The deeper principle here is that documentation accuracy is a reliability property, not a nice-to-have. A runbook that gives you wrong instructions during an incident doesn't just fail to help — it actively costs you time and confidence at the worst possible moment. I'd argue that a wrong runbook is more dangerous than no runbook, because no runbook forces you to reason from first principles, while a wrong one sends you confidently in the wrong direction.

What Good Runbook Hygiene Actually Looks Like

A few patterns that hold up under pressure:

Date-stamp and version your runbooks. Not just "last edited by," but a visible "verified against production as of [date]" field. When that date is six months old, it's a signal, not a guarantee of staleness — but it prompts the right question.

Write runbooks for the failure, not the system. A runbook titled "Redis" is a reference document. A runbook titled "Redis replication lag causing checkout timeouts" is an operational tool. The failure-oriented framing forces specificity about symptoms, blast radius, and recovery steps — and it makes drift more visible, because the failure mode either still exists or it doesn't.

Test your runbooks in non-incident conditions. Game days and chaos engineering exercises are partly about system resilience, but they're also the best way to discover that your runbooks don't match reality before 2:47am. If you can't run a game day, at minimum have engineers walk through runbooks during onboarding — new eyes catch stale assumptions that veterans have stopped noticing.

Track runbook usage. If a runbook hasn't been touched or consulted in two years, it's either perfectly written or completely irrelevant. Either way, it deserves a review. Usage data — even just a simple "was this helpful?" prompt at the bottom — creates a feedback loop that passive documentation never has.

Short Takes

On-call handoffs are where context goes to die. The shift handoff is one of the most underengineered parts of incident management. Most teams do it informally or not at all, which means the incoming engineer inherits a system state they don't fully understand. A structured handoff template — current alerts, recent deployments, anything that's "warm" but not yet paging — is low-effort and high-value. The teams that do this well treat it as a five-minute standup, not a document.

The postmortem that never gets written. There's a class of incidents that are "too small" to warrant a formal postmortem but "too significant" to just close the ticket. Teams tend to handle these inconsistently, which means the operational learning evaporates. A lightweight format — three fields: what happened, what we changed, what we'd do differently — captures the signal without the ceremony. The goal isn't process compliance; it's making sure the next engineer who hits the same thing has something to find.

Toil is a measurement problem before it's a workload problem. Most SRE teams know they have too much toil. Fewer have a clear picture of where it's concentrated. Before you can reduce toil, you need to track it — by service, by type, by engineer. Even rough categorization (manual intervention, repetitive alert response, deployment friction) reveals patterns that gut feel misses. The measurement doesn't have to be perfect to be useful.

The runbook problem is a proxy for a broader question about how teams maintain operational knowledge over time. Systems change faster than documentation does, and the gap between them is where incidents get worse. Closing that gap isn't about writing more — it's about building the triggers, ownership, and feedback loops that make accuracy a property the system maintains, not a goal individuals aspire to.