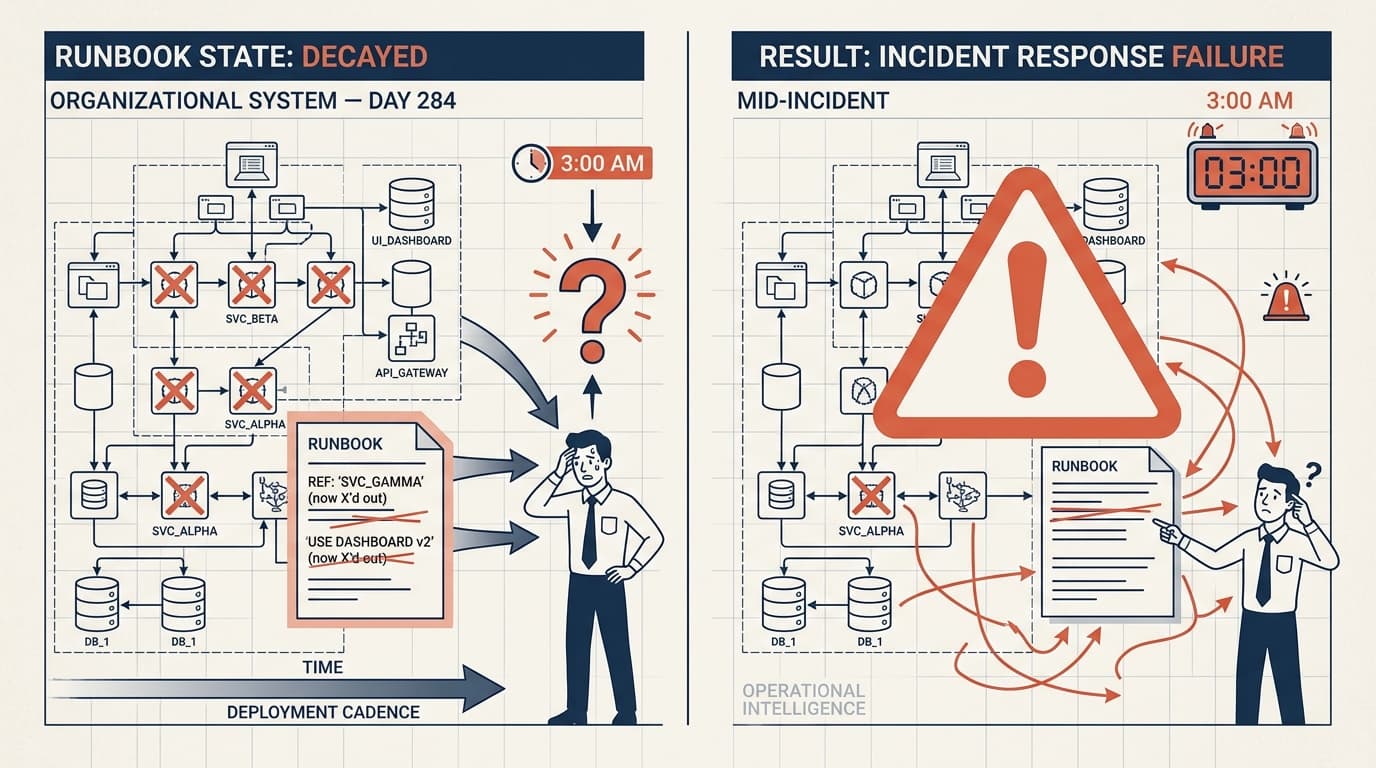

There's a particular kind of dread that hits when you're mid-incident, you've pulled up the runbook, and the first step references a service that was deprecated eight months ago. The second step assumes you have access to a dashboard that no longer exists. By step three, you've stopped reading and started guessing.

This isn't a documentation problem. It's a maintenance incentive problem — and it's one of the more honest tests of whether an organization actually practices operational excellence or just talks about it.

Runbooks Decay at the Speed of Your Deployment Cadence

The math here is brutal. Every deployment that changes a service's behavior, every infrastructure migration, every renamed metric — each one silently invalidates some portion of your incident response documentation. Teams that ship frequently can render a runbook obsolete within weeks of writing it. And because runbooks are only consulted under pressure, the decay goes unnoticed until exactly the wrong moment.

The pattern I see most often: runbooks get written during or immediately after a painful incident, when the knowledge is fresh and the motivation is high. Then they sit. Nobody has a strong incentive to update them during normal operations because the cost of outdated documentation is invisible until an incident surfaces it. By then, the engineer who wrote it may have moved teams, the system may have been refactored, and the person staring at the screen at 2am is working from a document that describes a system that no longer exists.

This is what makes runbook rot a reliability risk, not just a documentation annoyance. Stale runbooks extend incident duration. They introduce uncertainty at the moment when certainty is most valuable. And they erode trust in the documentation system itself — once engineers learn that runbooks can't be trusted, they stop consulting them, which means the good ones get ignored along with the bad ones.

The Fix Isn't More Discipline, It's Better Triggers

The standard advice is "update your runbooks regularly." This is correct and almost completely useless, because it treats documentation maintenance as a matter of willpower rather than system design.

The teams that actually maintain useful runbooks tend to do it through triggers, not schedules. A few patterns that work in practice:

Tie runbook review to deployment gates. If a service change touches anything a runbook references — a metric name, a service dependency, a configuration parameter — the PR process flags the relevant runbook for review. This doesn't require the author to update it, just to confirm it's still accurate or mark it for revision.

Make staleness visible during incidents. When an engineer consults a runbook mid-incident and finds a step that's wrong, the path to flagging it should be one click, not a Jira ticket they'll forget to file. Some teams add a simple "this step is wrong" button directly in their incident tooling. The friction of reporting decay has to be lower than the friction of ignoring it.

Treat runbook review as part of the postmortem. Not "did we follow the runbook" but "did the runbook reflect reality, and if not, when did it stop?" This surfaces decay systematically rather than waiting for the next incident to expose it.

The underlying principle: documentation maintenance has to be embedded in workflows that already happen, not added as a separate practice that competes with shipping.

What Good Looks Like Under Pressure

A runbook that works at 3am has a few properties that have nothing to do with completeness. It's short enough to scan, not read. It distinguishes between "do this first" and "consider this if the first thing doesn't work." It tells you what success looks like at each step, so you know whether to continue or escalate. And it was last touched recently enough that you can trust it.

That last part is the hardest to achieve and the most important. An engineer under pressure will make a fast judgment about whether a document is trustworthy based on signals like last-modified date, whether the author is still on the team, and whether the first step matches what they see on their screen. If those signals suggest the document is stale, they'll abandon it and improvise — which may be the right call, but shouldn't be forced by documentation failure.

The teams I'd trust with a production system aren't the ones with the most comprehensive runbooks. They're the ones whose runbooks have been edited in the last 30 days and show evidence of real incidents — notes in the margin, steps that were added after something went wrong, explicit callouts of the failure modes that actually happen versus the ones that seemed likely when the document was first written.

That's the difference between documentation as artifact and documentation as living operational knowledge. One gets you through the audit. The other gets you through the incident.

Worth watching: If your team is evaluating runbook tooling, the more useful question isn't which platform has the best editor — it's which one makes it easiest to see what hasn't been touched since your last major infrastructure change. Staleness visibility is the feature that matters.