Three hundred alerts in a week. Forty of them actionable. The other two hundred and sixty? Your team learned to ignore those months ago — they just haven't gotten around to deleting them. This is alert fatigue in its natural habitat: not a dramatic failure, but a slow erosion of signal until the monitoring system becomes background noise, and the first real incident in six months gets lost in the static.

Alert fatigue is one of those problems that's easy to diagnose in other teams and nearly invisible in your own. You don't decide to stop trusting your alerts. You just start glancing at them instead of reading them. Then you start closing them without investigating. Then an unspoken rule settles into team culture: "that one fires all the time, don't worry about it." The runbook entry never gets updated. The threshold never gets tuned. The alert just... persists, like a smoke detector with a dying battery that everyone's learned to sleep through.

Noise Is a Debt That Compounds

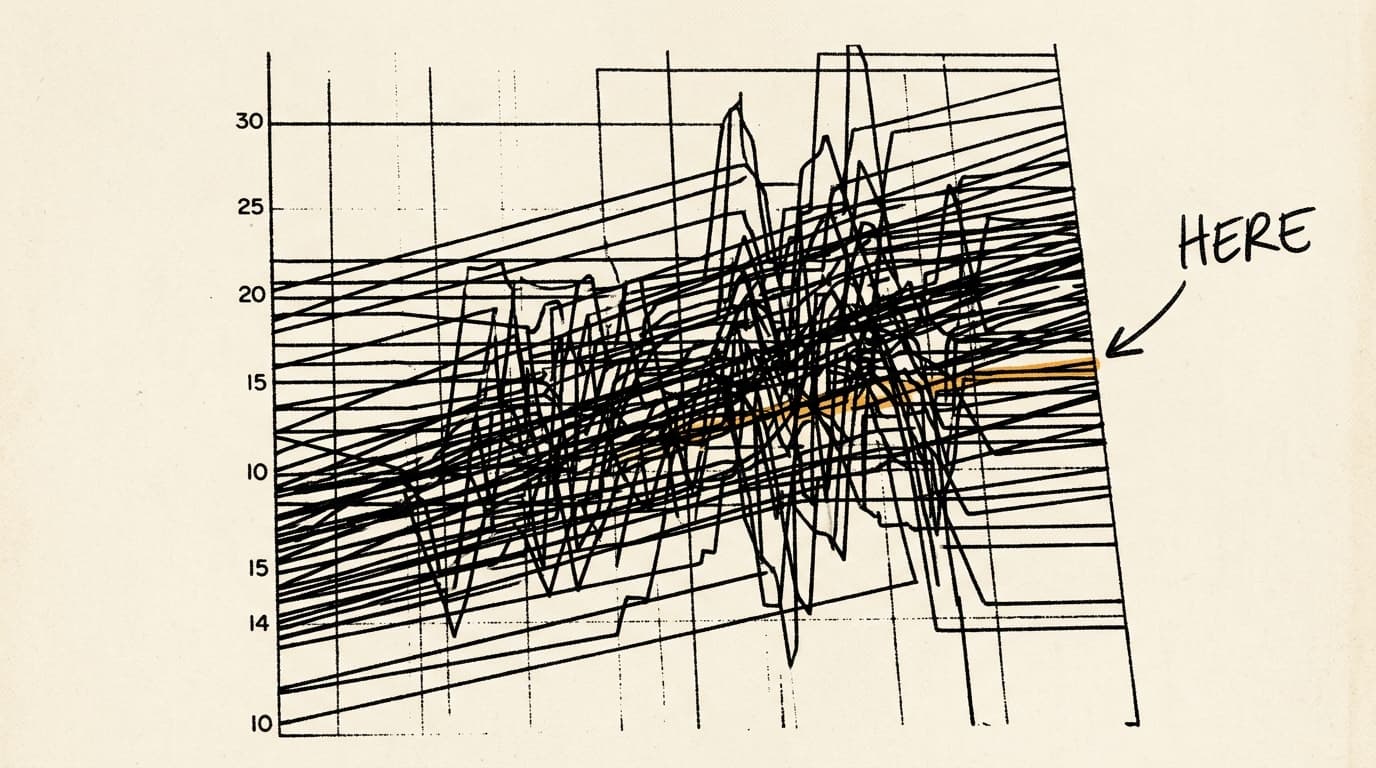

The insidious thing about alert noise isn't the volume — it's what it does to your team's mental model of the system. Every false positive is a small withdrawal from a trust account. After enough withdrawals, engineers stop forming hypotheses when an alert fires. They start pattern-matching to "probably nothing" instead. That cognitive shortcut is rational given their experience, and it will eventually be catastrophically wrong.

I'd argue this is the most underappreciated failure mode in incident management. Teams spend enormous energy on detection — better instrumentation, more coverage, tighter SLOs — and almost no energy on the quality of what those systems actually surface. You can have perfect observability and still miss an incident if the signal is buried under a hundred alerts that have cried wolf since Q3 of last year.

The operational debt here compounds in a specific way: noisy alerts are hard to delete because deleting them feels risky. What if this one time it's real? So they accumulate. The alert inventory grows. The ratio of signal to noise degrades. And the team's effective detection capability quietly collapses even as the monitoring dashboard looks increasingly comprehensive.

Tuning Is Maintenance, Not a Project

The fix isn't a one-time alert audit, though that's usually where teams start. The fix is treating alert quality as ongoing maintenance — the same way you treat dependency updates or capacity planning. Alerts go stale. Systems change. Thresholds that made sense at a certain traffic level become meaningless after growth. An alert that was meaningful during a migration period may have no operational relevance six months later.

A useful forcing function: require every alert to have an owner and a review date. Not a team — a person. Diffuse ownership is how you end up with two hundred and sixty alerts nobody trusts. When one person is accountable for whether an alert is worth firing, the calculus changes. They have to answer for the noise it generates. They have to keep the runbook current. They have to make the call when the alert's useful life is over.
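One way to make that rule enforceable is to lint the alert inventory on a schedule. What follows is a minimal sketch, not a reference to any particular monitoring tool: the `AlertRule` record, its field names, and the `audit_inventory` function are all hypothetical, and it assumes you can export your alert definitions into something shaped like this.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AlertRule:
    """Hypothetical inventory record; field names are illustrative only."""
    name: str
    owner: Optional[str]       # intended to be a single person, not a team alias
    review_by: Optional[date]  # date by which the owner must re-justify the alert


def audit_inventory(rules: list[AlertRule], today: date) -> dict[str, list[str]]:
    """Group alert names by the ownership problem they have."""
    problems: dict[str, list[str]] = {
        "no_owner": [],
        "no_review_date": [],
        "review_overdue": [],
    }
    for rule in rules:
        if not rule.owner:
            problems["no_owner"].append(rule.name)
        if rule.review_by is None:
            problems["no_review_date"].append(rule.name)
        elif rule.review_by < today:
            problems["review_overdue"].append(rule.name)
    return problems
```

A check like this can't tell whether the owner field holds a person or a team alias; that part still needs a human reading the list. What it can do is keep unowned and unreviewed alerts from accumulating unnoticed.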

The other practice worth building into your process: every alert that fires during an incident gets reviewed afterward. Not just the alerts that helped, but also the ones that fired and turned out to be irrelevant. Those are the candidates for tuning or deletion. Postmortems that examine only the failure itself miss the opportunity to clean up the monitoring that was supposed to catch it.
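The mechanical half of that review is small enough to script. Here is a rough sketch, assuming you can pull alert firings with timestamps and that responders note during the postmortem which alerts actually helped. The `Firing` record and `review_candidates` function are made-up names for illustration.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Firing:
    """One alert firing; a hypothetical record shape."""
    alert: str
    fired_at: datetime


def review_candidates(firings, start, end, helpful):
    """Return alerts that fired in [start, end] but were not marked helpful."""
    fired = {f.alert for f in firings if start <= f.fired_at <= end}
    return sorted(fired - set(helpful))
```

The output is only a candidate list. Whether each alert gets tuned, deleted, or kept is still a judgment call for its owner.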

What Good Looks Like Under Pressure

A well-maintained alert inventory has a specific feel during an incident. Alerts fire, and engineers immediately have a working theory. The signal is narrow enough that it points somewhere. The runbook is current enough that it's actually consulted. The team trusts what they're seeing, which means they can move fast instead of spending the first twenty minutes of an incident arguing about whether the alert is real.

That trust is built in the quiet periods — the weeks when nothing is on fire and someone is methodically reviewing alert history, deleting the ones that haven't been actionable in six months, tightening the thresholds on the ones that fire too broadly. It's unglamorous work. It doesn't show up in incident metrics until the day it matters, and then it shows up everywhere.
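That quiet-period review can start from a simple query over alert history. The sketch below assumes each firing has been labeled actionable or not, which most teams would have to record themselves. `AlertEvent`, `stale_alerts`, and the 182-day default window (roughly six months) are illustrative choices, not anything a specific tool provides.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class AlertEvent:
    """One historical firing; a hypothetical record shape."""
    alert: str
    fired_at: datetime
    actionable: bool  # assumed to be labeled by whoever handled the alert


def stale_alerts(history, now, window=timedelta(days=182)):
    """Alerts that fired within the window but were never actionable in it."""
    cutoff = now - window
    fired = set()
    acted_on = set()
    for event in history:
        if event.fired_at < cutoff:
            continue  # older than the review window; ignore
        fired.add(event.alert)
        if event.actionable:
            acted_on.add(event.alert)
    return sorted(fired - acted_on)
```

Note that this only flags alerts that fired and went unused. An alert that never fired in the window is a different question (it may be guarding something rare and real), so it is deliberately left out of the result.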

The teams I've seen handle incidents well aren't the ones with the most sophisticated monitoring stacks. They're the ones who've done the boring work of keeping their signal clean. When the real alert fires at 3am, they know it's real. That confidence — earned through maintenance, not tooling — is what separates a fifteen-minute incident from a three-hour one.

Build the habit before you need it. Your future on-call self will be too busy to thank you, but they'll notice.