After the 2001 anthrax attacks, Americans flooded emergency rooms with anxiety about bioterrorism. Billions in federal funding shifted toward biodefense. Meanwhile, the flu killed tens of thousands that same year, quietly, as it does every year, and most people didn't change a single habit.

That's not irrationality in the clinical sense. That's the availability heuristic doing exactly what it was built to do — and doing it badly.

The Mechanism Is Simple. The Damage Isn't.

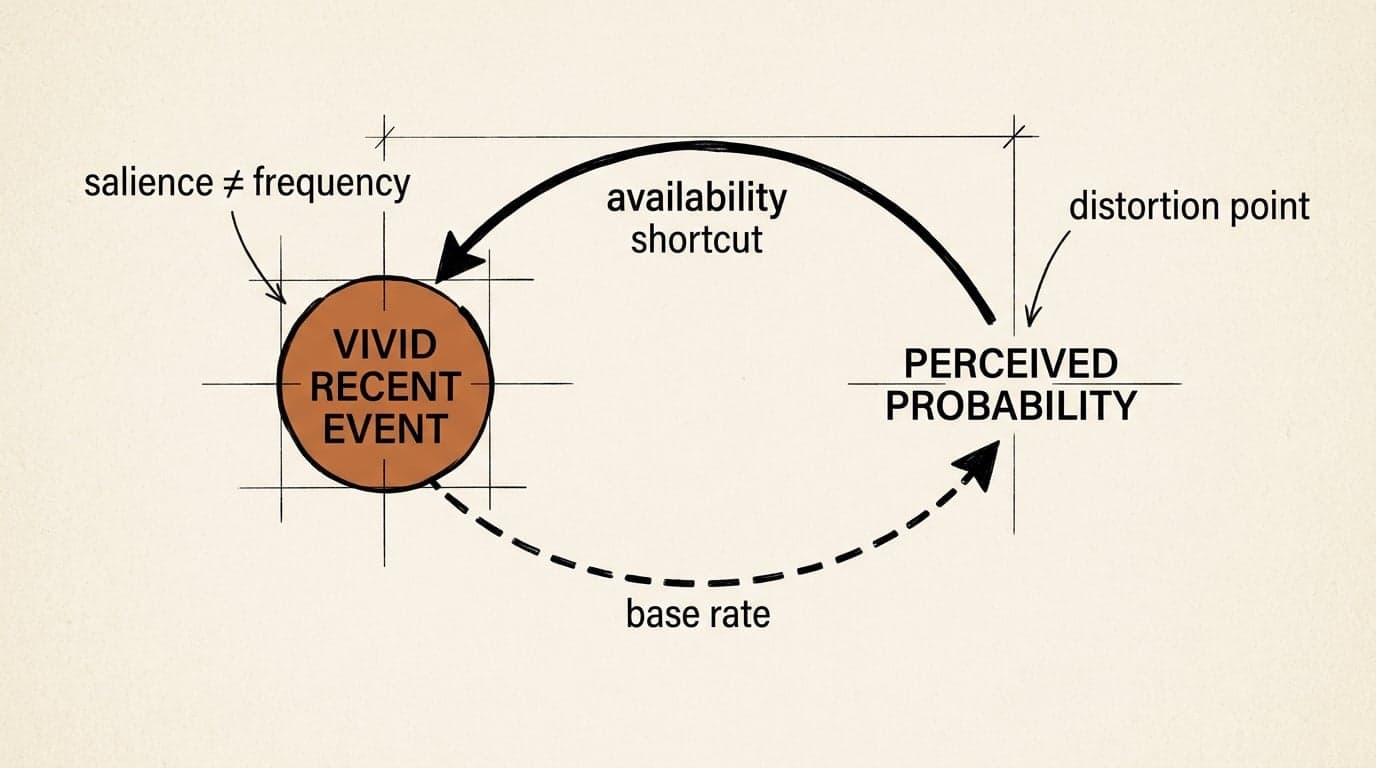

The availability heuristic, first described by Amos Tversky and Daniel Kahneman in 1973, is the mental shortcut where we estimate the probability of something based on how easily examples come to mind. Vivid, recent, emotionally charged events feel more likely. Slow, statistical, undramatic risks feel remote, even when they're far more probable.

As the University of Chicago's behavioral economics explainer notes, this is part of a broader pattern: people are not purely rational actors who weigh probabilities cleanly. We're emotional, context-sensitive, and heavily influenced by what's salient in our environment. The availability heuristic is one of the clearest examples of that gap between what we should do and what we actually do.

The problem isn't that the heuristic is useless. In a world where you had to consciously calculate every risk from scratch, you'd never leave the house. The problem is that availability is a proxy for probability — and it's a proxy that breaks down systematically whenever the information environment is distorted. Which, in the modern world, is basically always.

Where It Breaks Down in Practice

The distortion shows up most clearly in risk management contexts. A Risk Management Magazine analysis of cognitive biases in enterprise risk management identifies how biases corrupt the process at every stage — from risk identification to monitoring to reporting. The availability heuristic isn't listed by name, but its fingerprints are everywhere: organizations over-index on the last crisis they survived and systematically underweight risks that haven't materialized yet.

The pattern is consistent across domains. After a data breach makes headlines, security budgets spike toward the specific attack vector that was publicized — even when that vector is now well-defended and a different one is more exposed. After a high-profile project failure, teams add process controls for the exact failure mode that just happened, not the next one. The last disaster becomes the template for the next risk assessment.

This is availability doing what it does: making the recent and vivid feel like the probable and important.

Investopedia's breakdown of recency bias captures the financial version precisely — investors overemphasize recent market moves and underweight long-term base rates, selling into bear markets because recent losses feel like evidence of future losses. The mechanism is the same: the emotionally charged recent event crowds out the statistical reality.

The Failure Case That Actually Stings

Here's where I'd argue the model gets genuinely dangerous: when smart people use it deliberately.

Risk communicators, marketers, and policymakers all know that making something vivid makes it feel probable. That's not a bug they're exploiting — it's often the explicit strategy. You want people to wear seatbelts? Show them a crash. You want them to fear the wrong competitor? Make that competitor's wins feel recent and inevitable.

The result is that our collective risk perception gets shaped less by actual probability distributions and more by whoever has the most compelling recent story. Plane crashes get wall-to-wall coverage; car accidents don't. Rare violent crimes drive policy; chronic, statistical harms don't. The availability heuristic doesn't just distort individual decisions — it distorts the information environment that feeds everyone's heuristics simultaneously.

And here's the uncomfortable part: knowing about the bias doesn't reliably fix it. Research in behavioral economics consistently shows that awareness of a cognitive bias is a weak corrective. You can know, intellectually, that plane travel is safer than driving, and still feel more anxious boarding a flight than merging onto a highway. The emotional salience runs underneath the rational knowledge.

How to Use This Without Getting Burned

The practical move isn't to distrust your intuitions about risk entirely — that way lies paralysis. It's to build a specific habit: when you notice a risk feeling urgent or vivid, ask what made it feel that way right now. Is it recent news coverage? A conversation with someone who just experienced it? A near-miss that's still fresh?

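If the answer to any of those is yes, that's your signal to go find the base rate: how often this kind of thing actually happens across a large number of comparable cases. The point isn't to replace your gut feeling but to put it in conversation with the statistical reality. The goal is a calibrated estimate, not a purely cold one.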
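If you like to see a habit written down, here is one way the check above could look as a few lines of Python. It is only a sketch. The `RiskCheck` class, its field names, and the `rank_by_gap` helper are my own inventions for illustration, and the numbers in the example are placeholders, not real statistics. It records what your gut says, what the base rate says, and what made the risk feel vivid, then ranks risks by how far the feeling outruns the numbers.

```python
from dataclasses import dataclass


@dataclass
class RiskCheck:
    """One risk: a gut estimate, a looked-up base rate, and the salience cue.

    Both probabilities are expressed over the same period (e.g. per year).
    All names here are hypothetical, chosen for this illustration.
    """
    name: str
    felt_probability: float  # what your gut says, between 0.0 and 1.0
    base_rate: float         # what the statistics say, between 0.0 and 1.0
    salience_cue: str        # what made it feel vivid right now

    def overestimate_factor(self) -> float:
        """Return how many times larger the gut estimate is than the base rate."""
        if self.base_rate <= 0:
            raise ValueError("base_rate must be positive")
        return self.felt_probability / self.base_rate


def rank_by_gap(checks):
    """Sort risks so the most overestimated one comes first."""
    return sorted(checks, key=lambda c: c.overestimate_factor(), reverse=True)


if __name__ == "__main__":
    # Placeholder numbers, invented purely to show the mechanics.
    checks = [
        RiskCheck("quiet risk", felt_probability=0.01, base_rate=0.02,
                  salience_cue="nothing recent"),
        RiskCheck("vivid risk", felt_probability=0.10, base_rate=0.001,
                  salience_cue="news coverage this week"),
    ]
    for check in rank_by_gap(checks):
        print(f"{check.name}: felt {check.felt_probability:.3f}, "
              f"base rate {check.base_rate:.3f}, "
              f"factor {check.overestimate_factor():.1f}x "
              f"(cue: {check.salience_cue})")
```

A factor well above 1 means the feeling is running ahead of the numbers. A factor below 1 is the quieter failure: a risk you are underweighting because nothing has made it vivid yet. Writing the salience cue next to the numbers is the design choice that matters, since it keeps the question "why does this feel urgent right now?" attached to the estimate.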

The availability heuristic is a fast, cheap, usually-good-enough tool for navigating a world where you can't calculate everything. It just happens to fail exactly when the stakes are highest — when the information environment is loudest, most emotional, and most distorted.

Which is to say: when it matters most, trust it least.

Try This: Pick one risk you've been thinking about lately — professional, financial, personal. Write down what made it feel salient this week. Then spend five minutes looking for the base rate. Notice the gap between what you felt and what the numbers say. You don't have to resolve it. Just see it.