Lesson 7: Declarative Thinking and the Logic Programming Model

Here's a question that reframes how you think about code: what if you never told the computer how to find the answer — only what the answer looks like?

That's not a thought experiment. It's how Prolog works, and it's been working that way since Alain Colmerauer and his team built it in Marseille in the early 1970s. The original motivation was natural language processing — a domain where the relationships between things matter far more than the sequence of steps you take to find them. That origin shaped everything about the language's design, and the lessons it embedded are worth understanding even if you never write a single Prolog clause.

The Problem Prolog Was Built to Solve

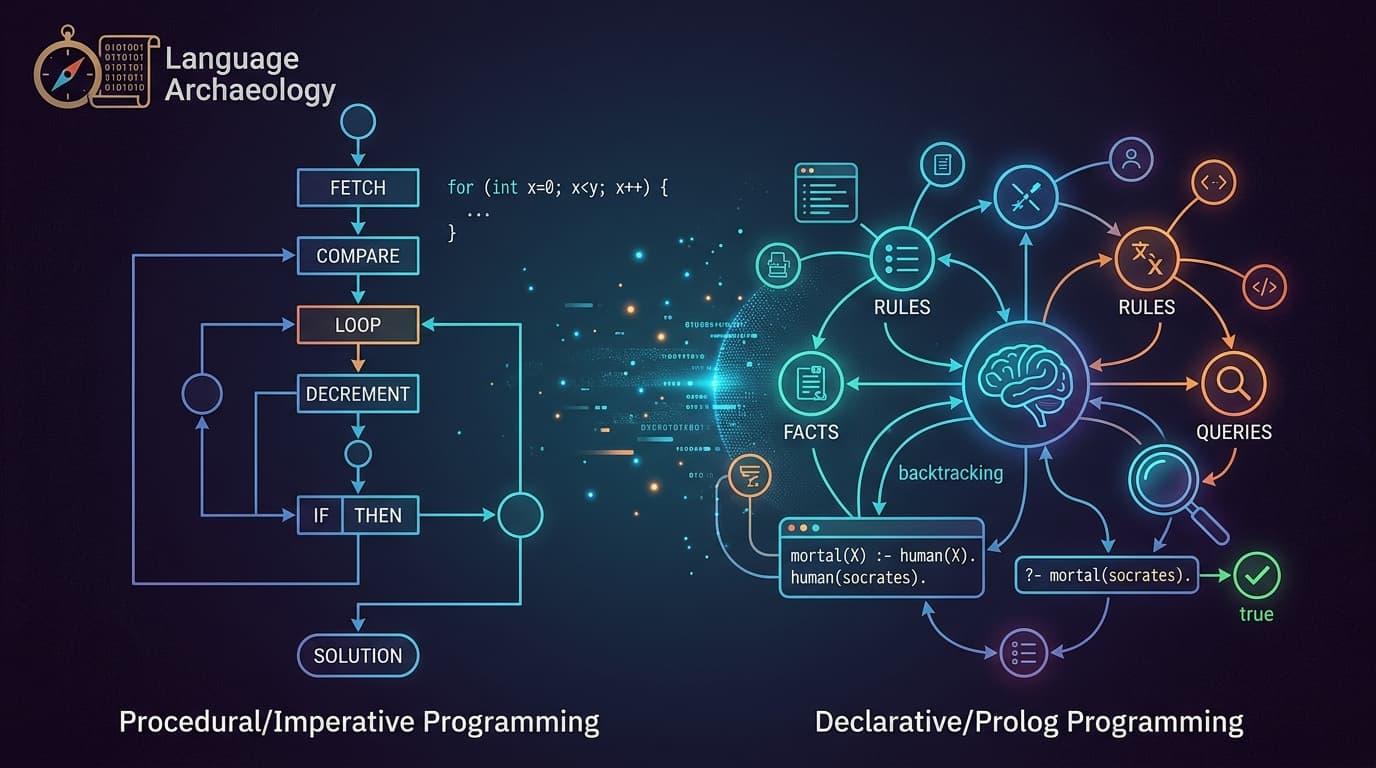

Most languages you use daily are imperative at heart. You write a procedure: fetch this, compare that, loop until done. The computer follows your instructions in order. You are, in effect, the algorithm.

Prolog inverts this. You declare facts and rules — the structure of your problem domain — and then ask questions. The engine figures out how to answer them.

The classic illustration is almost embarrassingly simple:

mortal(X) :- human(X).

human(socrates).

?- mortal(socrates).

true.

You didn't write a search loop. You didn't write a conditional. You stated two things: humans are mortal, and Socrates is human. The query mortal(socrates) succeeds because the engine can derive it from what you've told it. This is what Scholar's Hub describes as Prolog's core shift — from "how" to "what."

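The engine does this with two mechanisms working together. Unification matches your query against the facts and rule heads you've declared, and backtracking drives the search: the engine explores possible solutions depth-first, backing up and trying the next alternative when a path fails. You don't manage that search. You just describe the rules of the space.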
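To make that mechanism concrete, here is a minimal sketch of such an engine in Python, run against the Socrates program above. It is an illustration under simplifying assumptions, not how any real Prolog system is implemented: terms are modeled as plain tuples, the names `solve`, `unify`, `walk`, and `rename` are hypothetical, the extra `human(plato)` fact is added only for the demo, and refinements such as the occurs check are left out.

```python
from itertools import count

# Each clause is (head, body). Strings starting with an uppercase letter are
# variables, following Prolog's convention.
CLAUSES = [
    (("mortal", "X"), [("human", "X")]),  # mortal(X) :- human(X).
    (("human", "socrates"), []),          # human(socrates).
    (("human", "plato"), []),             # extra fact, added for the demo
]

_fresh = count()  # source of unique suffixes for renamed variables


def is_var(term):
    return isinstance(term, str) and term[:1].isupper()


def walk(term, env):
    """Follow variable bindings until reaching a value or an unbound variable."""
    while is_var(term) and term in env:
        term = env[term]
    return term


def unify(a, b, env):
    """Return an extended binding environment, or None if a and b cannot match."""
    a, b = walk(a, env), walk(b, env)
    if a == b:
        return env
    if is_var(a):
        return {**env, a: b}
    if is_var(b):
        return {**env, b: a}
    if isinstance(a, tuple) and isinstance(b, tuple) and len(a) == len(b):
        for x, y in zip(a, b):
            env = unify(x, y, env)
            if env is None:
                return None
        return env
    return None


def rename(term, suffix):
    """Give a clause's variables fresh names so separate uses never collide."""
    if is_var(term):
        return f"{term}_{suffix}"
    if isinstance(term, tuple):
        return tuple(rename(t, suffix) for t in term)
    return term


def solve(goals, env):
    """Yield one environment per way of proving every goal, depth-first."""
    if not goals:
        yield env
        return
    first, rest = goals[0], list(goals[1:])
    for head, body in CLAUSES:
        n = next(_fresh)
        new_env = unify(first, rename(head, n), env)
        if new_env is not None:
            yield from solve([rename(g, n) for g in body] + rest, new_env)
        # Moving on to the next clause here is the backtracking step.


# ?- mortal(socrates).
print(any(True for _ in solve([("mortal", "socrates")], {})))
# ?- mortal(Who).
print([walk("Who", env) for env in solve([("mortal", "Who")], {})])
```

The first query prints `True`, and the second prints `['socrates', 'plato']`: the caller only states a goal, while the `for` loop inside `solve` quietly tries every clause and discards the ones that fail to unify.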

Why Backtracking Is the Real Lesson

Backtracking sounds like a technical detail, but it's actually a design philosophy. It says: the programmer's job is to define valid states and relationships, not to enumerate search strategies.

This matters most when the problem space is relational — when you're asking questions like "what connects to what?" or "what satisfies these constraints simultaneously?" Natural language parsing, database queries, type inference, configuration solving — these are all fundamentally about relationships, not procedures.
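The "what satisfies these constraints simultaneously?" case can be sketched with a small generic backtracking search in Python. The regions, borders, and colors below are hypothetical example data, and `solve` is an illustrative helper rather than a library function. The point is the division of labor: the problem description is a single predicate, and the search code knows nothing about maps.

```python
def solve(variables, domain, ok, assignment=None):
    """Yield every complete assignment accepted by `ok`, using backtracking."""
    assignment = assignment or {}
    if len(assignment) == len(variables):
        yield dict(assignment)
        return
    var = variables[len(assignment)]  # variables are assigned in list order
    for value in domain:
        candidate = {**assignment, var: value}
        if ok(candidate):  # a violated constraint abandons this branch
            yield from solve(variables, domain, ok, candidate)


# Hypothetical problem description: color regions so neighbors differ.
REGIONS = ["wa", "nt", "sa", "q"]
BORDERS = [("wa", "nt"), ("wa", "sa"), ("nt", "sa"), ("nt", "q"), ("sa", "q")]
COLORS = ["red", "green", "blue"]


def no_neighbors_share_a_color(assignment):
    """The only problem-specific rule; regions not yet assigned are ignored."""
    return all(
        assignment[a] != assignment[b]
        for a, b in BORDERS
        if a in assignment and b in assignment
    )


solutions = list(solve(REGIONS, COLORS, no_neighbors_share_a_color))
print(solutions[0])
print(len(solutions))
```

With this data the search finds six valid colorings. Changing the problem means editing `BORDERS` or the predicate, never the search loop, which is the separation the paragraph above describes.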

Developer Paul Tarvydas describes exactly this in a practical context: when building a diagram compiler, he used Prolog specifically for the inferencing step — determining which shapes were ports, which were parts, and how they connected. His observation cuts to the core of it: "Prolog notation is much, much better for dealing with inferencing than anything else I've found, e.g. loops within loops, recursive functions." For that specific class of problem, writing the loops yourself is just noise. The relationships are the program.

The flip side is equally instructive. Tarvydas switched to JavaScript the moment he needed to manipulate strings and write output files. Prolog is expressive for relational reasoning and noticeably more awkward for that kind of procedural work. That's not a flaw; it's a signal about what the language was designed to do.

What Prolog's Descendants Reveal

Prolog's influence didn't stay inside Prolog. Its core idea — describe relationships, let the engine search — propagated into other tools.

Datalog, a restricted subset of Prolog, trades Prolog's full expressiveness for stronger guarantees: queries over a finite set of facts always terminate, and the order in which you write the rules doesn't affect the results. Those guarantees made it practical for program analysis, database engines, and security policy systems. Modern tools like Datomic and several static analyzers use Datalog-style reasoning.
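One way to see where the termination guarantee comes from is bottom-up evaluation: keep applying the rules to the known facts until nothing new appears. The Python sketch below does this naively for a reachability rule. The function name `transitive_closure` and the edge data are assumptions for illustration, and real Datalog engines use more efficient strategies than this loop.

```python
def transitive_closure(edges):
    """Naive bottom-up evaluation of two Datalog-style rules:

        path(X, Y) :- edge(X, Y).
        path(X, Z) :- path(X, Y), edge(Y, Z).
    """
    path = set(edges)  # first rule: every edge is a path
    while True:
        # Second rule: extend each known path by one edge.
        derived = {(x, z) for (x, y) in path for (y2, z) in edges if y == y2}
        if derived <= path:  # fixpoint reached, nothing new can be derived
            return path
        path |= derived


# Hypothetical data containing a cycle: a -> b -> c -> a
EDGES = {("a", "b"), ("b", "c"), ("c", "a")}

paths = transitive_closure(EDGES)
print(sorted(paths))
```

Because only finitely many `(x, z)` pairs exist, the loop has to stop, even though the data contains a cycle. A depth-first engine given the same rules can keep chasing that cycle indefinitely, which is the trade-off the paragraph above points at.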

SQL belongs in this family in spirit, if not in lineage: it grew out of Codd's relational model in the early 1970s, at roughly the same time Prolog was taking shape. When you write a SELECT statement with joins and conditions, you're describing what you want, not how to retrieve it. The query planner handles the search. Most developers use this every day without thinking of it as declarative programming, but the kinship with Prolog's model is real.
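A small sketch using Python's standard `sqlite3` module shows the same idea. The `teacher_of` table and its rows are hypothetical example data. The query states a relationship (a teacher of a teacher) and leaves the join strategy entirely to the database.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE teacher_of (teacher TEXT, student TEXT);
    INSERT INTO teacher_of VALUES ('socrates', 'plato');
    INSERT INTO teacher_of VALUES ('plato', 'aristotle');
    """
)

# "Who taught the teacher of aristotle?"
# Roughly the rule: grand_teacher(G, S) :- teacher_of(G, T), teacher_of(T, S).
rows = conn.execute(
    """
    SELECT t1.teacher
    FROM teacher_of AS t1
    JOIN teacher_of AS t2 ON t1.student = t2.teacher
    WHERE t2.student = ?
    """,
    ("aristotle",),
).fetchall()

print(rows)
```

Nothing in the query says which table to scan first or how to match rows; those decisions belong to the query planner, just as clause selection belongs to the Prolog engine.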

The pattern suggests that declarative thinking keeps escaping into mainstream tools precisely because it's the right model for a specific class of problems: any time you're specifying constraints on a solution rather than steps to reach one.

Your Next Action

Find one place in your current codebase where you've written a search loop — iterating through a collection to find something that matches multiple conditions. Rewrite the intent of that search as a set of declarative rules, even in pseudocode. Don't worry about making it run. Just practice separating "what I'm looking for" from "how I'm looking for it."
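As a starting point for that exercise, here is one possible shape in Python. The `Order` type, the sample data, and the three conditions are all hypothetical. The first function mixes the conditions into the traversal; the second states them as named rules and hands the traversal to a generic expression.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    order_id: int
    total: float
    country: str
    shipped: bool


ORDERS = [
    Order(1, 40.0, "FR", False),
    Order(2, 250.0, "DE", False),
    Order(3, 180.0, "FR", True),
    Order(4, 320.0, "FR", False),
]


def find_with_loop(orders):
    """How: walk the list and test the conditions one by one."""
    for order in orders:
        if order.total > 100:
            if order.country == "FR":
                if not order.shipped:
                    return order
    return None


# What: each rule names one property of the order being looked for.
def is_large(order):
    return order.total > 100


def is_french(order):
    return order.country == "FR"


def is_unshipped(order):
    return not order.shipped


RULES = (is_large, is_french, is_unshipped)


def find_with_rules(orders, rules=RULES):
    """Return the first order that satisfies every rule, or None."""
    return next((o for o in orders if all(rule(o) for rule in rules)), None)


print(find_with_loop(ORDERS) == find_with_rules(ORDERS))
```

Both functions return the same order here. The difference is that `RULES` can be read, reordered, or extended without touching the code that searches.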

That separation is what Prolog spent fifty years teaching. Once you can see it clearly, you'll start noticing where your existing tools already support it — and where they're making you do work the engine could do instead.