Lesson 8: When Notation Becomes the Language

Ken Iverson had a complaint. It was 1962, and most programming languages, in his view, were "decidedly inferior to mathematical notation" as tools for thought. Mathematicians had spent centuries refining notation precisely because good symbols make ideas visible: they compress, they suggest relationships, they let you see patterns you'd miss in prose. Why should programming be different?

So Iverson built APL. And he was right about the notation problem. He was also, depending on your tolerance for glyphs like ⍋⌽⍉, either a genius or a monster.

Both things are true. That's what makes APL worth studying.

The Original Problem: Programming Languages Couldn't Think

Iverson's 1979 Turing Award lecture, Notation as a Tool of Thought, laid out the thesis clearly: notation isn't just a way to write down ideas you already have. Good notation actively shapes what you can think. A mathematician working with matrix notation can see associativity and distributivity in a way that someone working through nested loops cannot.

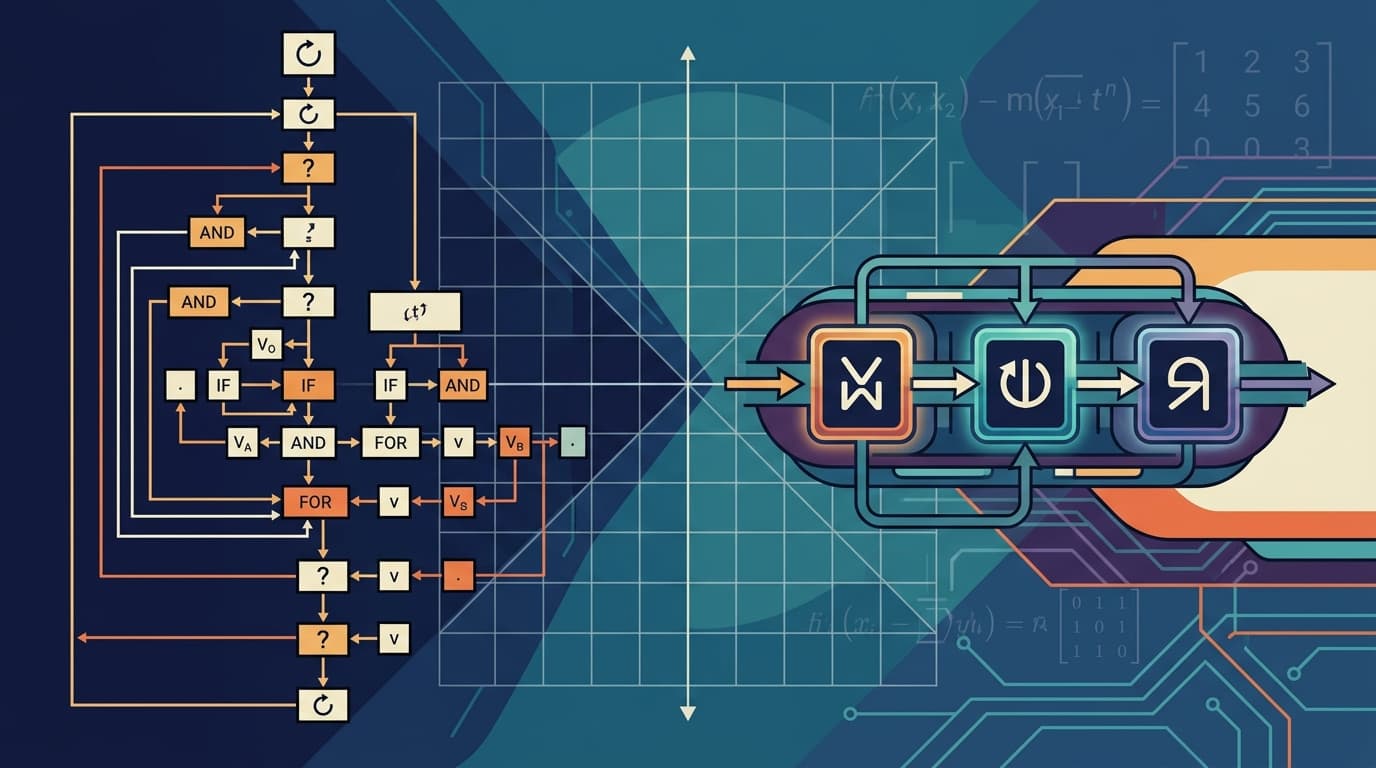

His insight about array programming was that most operations programmers write — iterating over collections, transforming data, applying functions uniformly — are fundamentally operations on aggregates, not on individual values. The loop is an implementation detail, not the idea. APL was designed to express the idea directly: operations apply to entire arrays at once, without explicit iteration.

This wasn't just syntactic sugar. It was a different model of computation. When you write +/ in APL to sum an array, you're not describing how to sum it — you're stating what you want. The language handles the rest.
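
To make the contrast concrete, here is a rough Python sketch. Python is not APL, and the function names are illustrative only. The loop version spells out how to accumulate; the reduce version, loosely analogous to +/, only names the operation being folded over the array.

```python
from functools import reduce
import operator


def sum_with_loop(values):
    """Explicit iteration: the 'how' is spelled out step by step."""
    total = 0
    for v in values:
        total = total + v
    return total


def sum_with_reduce(values):
    """Fold + over the whole collection, in the spirit of APL's +/."""
    return reduce(operator.add, values, 0)


data = [3, 1, 4, 1, 5, 9]
print(sum_with_loop(data))    # 23
print(sum_with_reduce(data))  # 23
```

Both functions return the same number. The difference is in what the reader has to reconstruct: the loop makes you infer "this is a sum", while the reduce says so up front.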

The payoff is real. Array programming one-liners routinely express what would take several pages of object-oriented code. That's not an exaggeration for effect; it reflects a genuine compression of intent.

The Design Decision That Cut Both Ways

Here's where Iverson made a choice that still divides programmers: he used a custom symbol set. Not ASCII characters repurposed awkwardly, but purpose-built glyphs — ⍋ for grade-up, ⌽ for reverse, ⍉ for transpose — each one a visual shorthand for a specific array operation.
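
For readers who don't know the glyphs, the three operations named above can be approximated in plain Python. This is a sketch under assumptions: the helper names are made up for illustration, indices are 0-based (APL defaults to 1-based), and only flat lists and rectangular lists of lists are handled.

```python
def grade_up(values):
    """Indices that would sort `values` ascending (APL: grade-up, 0-based here)."""
    return sorted(range(len(values)), key=lambda i: values[i])


def reverse(values):
    """Reverse a list (APL: reverse applied to a vector)."""
    return values[::-1]


def transpose(matrix):
    """Swap rows and columns of a rectangular list of lists (APL: transpose)."""
    return [list(column) for column in zip(*matrix)]


scores = [30, 10, 20]
print(grade_up(scores))                   # [1, 2, 0]
print(reverse(scores))                    # [20, 10, 30]
print(transpose([[1, 2, 3], [4, 5, 6]]))  # [[1, 4], [2, 5], [3, 6]]
```

Each helper takes a line or two of Python and a name you have to look up. In APL each is a single character, which is exactly the trade being made.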

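The logic was sound. If notation shapes thought, then precise notation matters. Stretching a handful of ASCII characters such as +, / and * across many unrelated meanings creates ambiguity. A dedicated glyph stands for one concept, with at most a one-argument (monadic) and a two-argument (dyadic) reading: ⌽ reverses an array when given one argument and rotates it when given two.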

The problem is that "unambiguous" and "learnable" are different properties. A page of APL looks, to the uninitiated, like someone fell asleep on a keyboard that had been replaced with a mathematics textbook. The symbols require a separate learning investment before you can read a single line of code. You're not learning a language; you're learning an alphabet first.

This is the core tension Iverson identified himself: the difficulty of describing a notation is different from the difficulty of mastering its implications. APL's symbols are learnable. What they suggest — the combinatorial possibilities of composing array primitives — takes much longer to internalize.

What APL's Philosophy Actually Demands

John Earnest, who works extensively with K (a language that fuses APL sensibilities with Lisp ideas), put the underlying philosophy precisely in a recent interview: APL-family languages pursue "programming without abstractions." You write the program directly in terms of the language's primitives. The ideal isn't to build up layers of named concepts — it's to express the solution as a direct composition of fundamental operations.

This is philosophically opposite to how most programmers are trained. We learn to decompose problems into named abstractions, build hierarchies, hide complexity behind interfaces. APL says: if your primitives are powerful enough, you don't need the hierarchy. The solution is the composition.
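
A loose illustration of "the solution is the composition", sketched in Python. The helper names are hypothetical stand-ins for things APL provides as built-in glyphs, and the APL expressions in the comments are commonly cited idioms written in Dyalog-style notation, not something taken from Earnest's interview.

```python
from functools import reduce
import operator


def grade_up(values):
    """Indices that would sort `values` ascending (0-based)."""
    return sorted(range(len(values)), key=lambda i: values[i])


def index(values, positions):
    """Pick elements of `values` at the given positions."""
    return [values[p] for p in positions]


def plus_reduce(values):
    """Fold + over a list."""
    return reduce(operator.add, values, 0)


v = [30, 10, 20]

# Sorting is "index the data by its own grade"; in APL: v[⍋v]
ordered = index(v, grade_up(v))

# The mean is "sum divided by count"; in APL: (+/v)÷≢v
mean = plus_reduce(v) / len(v)

print(ordered)  # [10, 20, 30]
print(mean)     # 20.0
```

Neither result needed a dedicated sort or mean function. Once the primitives exist, the "library" is just the expression itself, which is the point of the philosophy and also the reason it asks so much of the reader.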

Earnest's analogy is sharp: in the novel The Mote in God's Eye, the engineer caste never builds a generic chair — they build the precise chair one person needs at this exact moment. APL code reuse works similarly. Instead of importing a library and trusting its encapsulation, you take a snippet and reshape it for your exact need.

That's a coherent philosophy. It's also one that requires you to hold the entire primitive vocabulary in your head before it becomes productive.

The Lesson for Every Programmer

APL didn't fail because its symbols were ugly. It succeeded — NumPy, MATLAB, R, Julia, and pandas all carry its DNA. The idea that operations should apply to whole arrays, not individual elements, is now mainstream.

What APL teaches is that notation and learnability are genuinely in tension, and that tension doesn't resolve itself. Every language designer faces it. Haskell's type operators, Rust's lifetime annotations, Clojure's threading macros — each is a bet that expressive precision is worth the upfront investment.

The question worth sitting with: in your own code, where are you writing loops when you should be thinking in aggregates? APL's symbols may be impenetrable, but the underlying question — what is the actual shape of this data transformation? — is one every programmer should be asking.

Next action: Find one place in your current codebase where you're iterating explicitly over a collection. Ask whether the operation has a name — map, filter, reduce, zip. If it does, the loop is hiding the idea. Name it.
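
As a starting point, here is what that exercise might look like on a small, invented example (the `orders` data and function names are hypothetical). The loop and the named version compute the same total; the second one states the shape of the transformation as filter, then map, then reduce.

```python
from functools import reduce

orders = [
    {"item": "pen", "qty": 3, "price": 2.0},
    {"item": "ink", "qty": 0, "price": 9.5},
    {"item": "pad", "qty": 2, "price": 4.0},
]


def total_with_loop(orders):
    """Explicit loop: filtering, transforming, and summing are interleaved."""
    total = 0.0
    for order in orders:
        if order["qty"] > 0:
            total += order["qty"] * order["price"]
    return total


def total_named(orders):
    """Same computation with each step named: filter, map, reduce."""
    in_stock = filter(lambda o: o["qty"] > 0, orders)
    line_totals = map(lambda o: o["qty"] * o["price"], in_stock)
    return reduce(lambda acc, x: acc + x, line_totals, 0.0)


print(total_with_loop(orders))  # 14.0
print(total_named(orders))      # 14.0
```

Whether the second form is clearer in your codebase is a judgment call. The exercise is to notice that the loop was three named operations all along.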