The Headline Number

"Black voters now participate in elections at similar rates as the rest of the electorate, even turning out at higher rates than white voters in two of the five most recent Presidential elections nationwide and in Louisiana." — Justice Samuel Alito, majority opinion, Louisiana v. Callais, May 2026

The Audit: The Denominator Was Doing All the Work

I've written about this case twice already — first on the voter turnout claim circulating in the broader debate, then on the specific denominator problem embedded in how "eligible voters" gets defined. This week, the Supreme Court issued its actual ruling, and Justice Alito put the number directly into the majority opinion. So now we have something more consequential than a viral tweet: a methodological choice baked into binding constitutional law.

Let's trace it.

Alito's claim came almost verbatim from a Department of Justice amicus brief. The DoJ calculated Black and white voter turnout in Louisiana as a share of each group's total population over age 18. The Guardian's analysis found that this approach — using the raw over-18 population as the denominator — is not the standard methodology among election researchers, and for a straightforward reason: the over-18 population includes non-citizens, people with felony convictions, and others who are legally barred from voting.

This is not a minor technical quibble. It is the entire argument.

When you use total adult population as your denominator, you're measuring something like "votes cast per adult resident." When you use citizen voting-age population (CVAP) or voter-eligible population (VEP) — the standard approaches — you're measuring "votes cast per person who could legally vote." These produce different numbers. The question is which one you should use when you're making a claim about whether a group is participating in democracy at equal rates.

The answer is obvious. If you want to know whether Black voters in Louisiana are turning out at rates comparable to white voters, you need to compare people who are actually eligible to vote. Including non-eligible residents in the denominator lowers every group's measured turnout rate, and if that population is distributed unevenly across racial groups, it lowers them by different amounts and systematically distorts the comparison.
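To make the arithmetic concrete, here is a minimal sketch with entirely hypothetical numbers. Groups "A" and "B", their vote counts, and their population figures are invented for illustration and are not Louisiana data; the `eligible` field is a rough stand-in for CVAP or VEP. It shows how the same vote counts can rank two groups in opposite order depending on the denominator.

```python
from dataclasses import dataclass


@dataclass
class Group:
    name: str
    votes: int     # ballots cast (hypothetical)
    adults: int    # everyone aged 18+, eligible or not (hypothetical)
    eligible: int  # adults who can legally vote (hypothetical stand-in for CVAP/VEP)


def turnout(group: Group, denominator: str) -> float:
    """Votes divided by the chosen denominator field ('adults' or 'eligible')."""
    return group.votes / getattr(group, denominator)


def leader(groups: list[Group], denominator: str) -> str:
    """Name of the group with the highest turnout under the given denominator."""
    return max(groups, key=lambda g: turnout(g, denominator)).name


# Invented figures: group B has more non-eligible adults than group A.
groups = [
    Group("A", votes=600, adults=1000, eligible=950),
    Group("B", votes=610, adults=1100, eligible=960),
]

for denom in ("adults", "eligible"):
    rates = {g.name: round(turnout(g, denom), 3) for g in groups}
    print(denom, rates, "leader:", leader(groups, denom))
```

With these made-up inputs, group A leads when the denominator is all adults, and group B leads when the denominator is eligible adults. Nothing about the votes changed; only the definition of who counts as a potential voter did.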

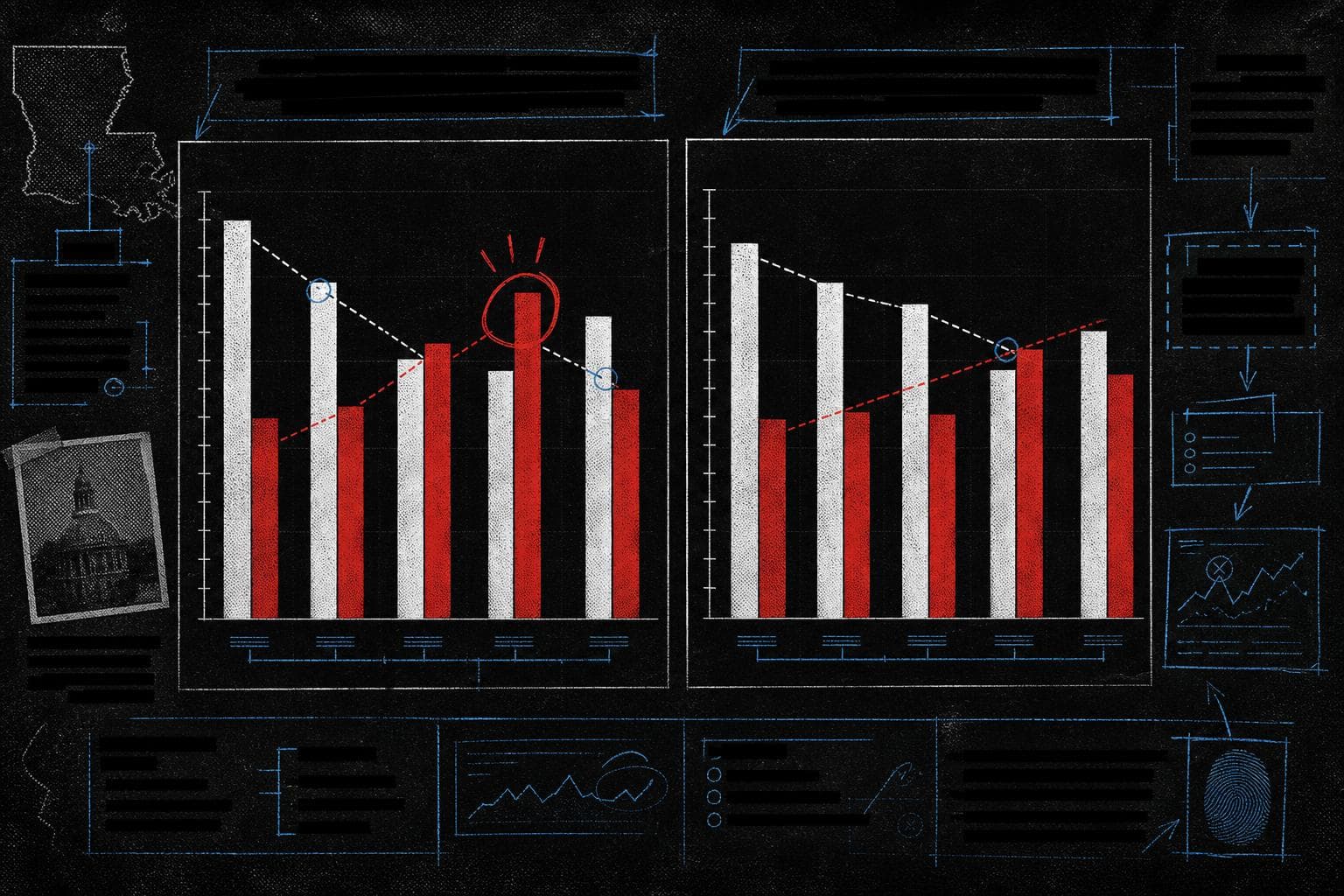

The Guardian ran the numbers using CVAP. The result: under the standard methodology, Black voter turnout in Louisiana exceeded white voter turnout in exactly one of the five most recent presidential elections (2012), not two. The DoJ's unusual denominator choice is what generates the second data point — 2016 — that Alito needed to write "two of the five."

One election versus two elections. It sounds like a rounding dispute. It isn't. Alito's argument is that the kind of structural discrimination that once made the Voting Rights Act necessary has been substantially remedied — that Black voters now participate at comparable or superior rates. That argument requires the data to show a pattern, not an outlier. One election where Black turnout exceeded white turnout is consistent with a story about Obama-era mobilization. Two elections starts to look like a trend. The methodology choice is what converts the single data point into the pattern the opinion needs.

The forensic question here isn't whether Alito was lying. He cited a DoJ brief, and the brief used a real dataset. The question is why the DoJ chose an unusual denominator, whether anyone flagged it before it was cited in a Supreme Court opinion, and whether the opinion would have read differently if the standard methodology had been applied. The Guardian's analysis suggests the answer to that last question is yes.

There's a secondary issue worth naming. The claim that Black voter turnout "exceeded" white voter turnout — even under the DoJ's preferred methodology — is being used to argue that the conditions requiring Section 2 protection no longer exist. But turnout is one dimension of electoral participation. It doesn't capture voter registration barriers, polling place access, candidate viability, or the downstream effects of district maps — which is precisely what the Louisiana case was about. Using turnout parity as evidence that structural discrimination has ended is like using attendance figures to argue a concert venue has no accessibility problems. People who got in showed up. That's not the whole question.

Verdict: Misleading. The specific claim, "two of the five most recent Presidential elections" in Louisiana, depends on a non-standard denominator that is not the accepted methodology among election researchers for measuring statewide turnout. Under the standard methodology, the number is one, not two. The broader argument that turnout data resolves questions about structural electoral discrimination conflates one metric with a much larger evidentiary question.

The Methodology Laundering Problem

There's a pattern worth naming that goes beyond this case. Call it methodology laundering: a non-standard analytical choice produces a more favorable result, that result gets cited in an authoritative document (a brief, a report, a regulatory filing), and the authoritative document then gets cited as though it were the primary source. By the time the number appears in a Supreme Court opinion, the original methodological choice is three steps removed and invisible to most readers.

The DoJ brief cited a dataset. Alito cited the DoJ brief. News coverage cited Alito's opinion. At each step, the number gains authority and loses context. Nobody in that chain fabricated anything. But the denominator choice — the decision to use total adult population rather than citizen voting-age population — was made at step one, and it determined the conclusion.

This is why "the data is real" is not a sufficient defense of a statistical claim. The data was real in the petrol chart that got the price gap wrong by a factor of four. The data was real in the 98.2% number that turned out to be a trick. The data is real here. What matters is whether the methodology matches the question being asked. In this case, it doesn't.

The Mifepristone Parallel Is Not a Coincidence

This week also brought a nearly identical structure in a completely different policy domain. Republican lawmakers cited a figure — "1 in 10 women who take mifepristone end up in the emergency room" — in the context of ongoing Supreme Court litigation over medication abortion access. FactCheck.org traced the number to a 2025 report from the Ethics and Public Policy Center, a conservative nonprofit that opposes abortion, which analyzed health insurance claims data from an undisclosed source.

The methodological problem: the EPPC report counted "adverse events" within 45 days of mifepristone use, but adverse events are health issues that arise after taking a drug, not necessarily events caused by the drug. A woman who takes mifepristone and then visits an emergency room for an unrelated reason within 45 days gets counted. The report also used an undisclosed data source, which means independent researchers cannot replicate or audit the methodology. An amicus brief from 360 reproductive health researchers filed with the Supreme Court called the report "riddled with methodological flaws."
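The gap between "happened afterward" and "was caused by" can be sketched in a few lines. Everything below is hypothetical: the records, the patient count, and especially the `related` flag, which real claims data would not hand you and which is assumed here only to show the two counting rules side by side. This is not a reconstruction of the EPPC analysis.

```python
WINDOW_DAYS = 45   # the window described in the report
N_PATIENTS = 20    # hypothetical cohort size

# Hypothetical ER visits after taking the drug. The 'related' flag is an
# assumption for illustration; it stands in for a clinical judgment.
visits = [
    {"patient": 3, "day": 10, "related": True},
    {"patient": 7, "day": 30, "related": False},   # unrelated visit, inside the window
    {"patient": 12, "day": 60, "related": False},  # outside the window
]

# Rule 1: count any patient with an ER visit inside the window.
any_visit = {v["patient"] for v in visits if v["day"] <= WINDOW_DAYS}

# Rule 2: count only patients whose in-window visit was judged related.
related_visit = {
    v["patient"] for v in visits if v["day"] <= WINDOW_DAYS and v["related"]
}

any_rate = len(any_visit) / N_PATIENTS
related_rate = len(related_visit) / N_PATIENTS

print(f"any ER visit within {WINDOW_DAYS} days: {any_rate:.0%}")
print(f"related ER visit within {WINDOW_DAYS} days: {related_rate:.0%}")
```

In this invented cohort the first rule yields a rate twice as high as the second, purely because of what gets counted in the numerator. The size of the gap in any real dataset is an empirical question that cannot be answered without access to the underlying data.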

I covered the "1 in 10" number in the May 13 issue. What's worth adding here is the structural parallel to the Louisiana turnout case: in both instances, a non-peer-reviewed document with a non-standard methodology produced a number that was then cited in Supreme Court proceedings as though it were established fact. In both cases, the number is technically derived from real data. In both cases, the methodology choice is what generates the politically useful result. In both cases, the laundering happened through an authoritative intermediary — a DoJ brief, a congressional statement — that stripped the methodological context before the number reached its final destination.

The pattern is not partisan. It's procedural. And it's worth watching for wherever high-stakes litigation intersects with contested empirical claims.

By the Numbers

"Unemployment has risen every month that Labour have been in Government" — the UK Conservative Party's "Alternative King's Speech" repeated this claim, which Full Fact found to be incorrect: ONS figures show unemployment decreased by 89,000 between January and February 2026, and several smaller month-to-month decreases occurred during Labour's tenure. The overall trend since July 2024 is upward, but "every month" is a different claim than "on net" — and the Conservatives appear to have written the document before the most recent data dropped.

"More Americans doubt vaccine safety than trust it" — a Politico headline from late April that spread rapidly, based on a poll question that asked respondents to choose between two statements, one of which bundled "the science is up for debate" with "it is damaging to enforce vaccine uptake." A STAT News analysis found the question design conflated skepticism about vaccine science with opposition to mandates — two distinct positions — making it impossible to isolate actual vaccine skepticism from the results. The headline treated a question about mandates as evidence about scientific trust.

Community notes on X reduce the spread of flagged posts — a Nature Communications study found that community-based fact-checking is causally associated with reduced spread of misleading content on X. The finding is genuinely useful. What the available summary doesn't tell us: sample size, the time window studied, or whether the effect size is large enough to matter at platform scale. "Reduces spread" is doing a lot of work until we see the magnitude. File under: promising, not proven.

The through-line this week is the same as most weeks. The numbers are real. The denominators are chosen. And the choice of denominator is always, always the argument.