Your pediatrician says no screens before two. The AAP has a chart. Every parenting article you've read treats this as settled science. And there is real research here — longitudinal studies, large cohorts, peer-reviewed findings in JAMA Pediatrics. The problem isn't that the evidence doesn't exist. The problem is what gets lost between the study and the headline.

Let's actually look at what the research measured.

What the Studies Found (And What They Didn't)

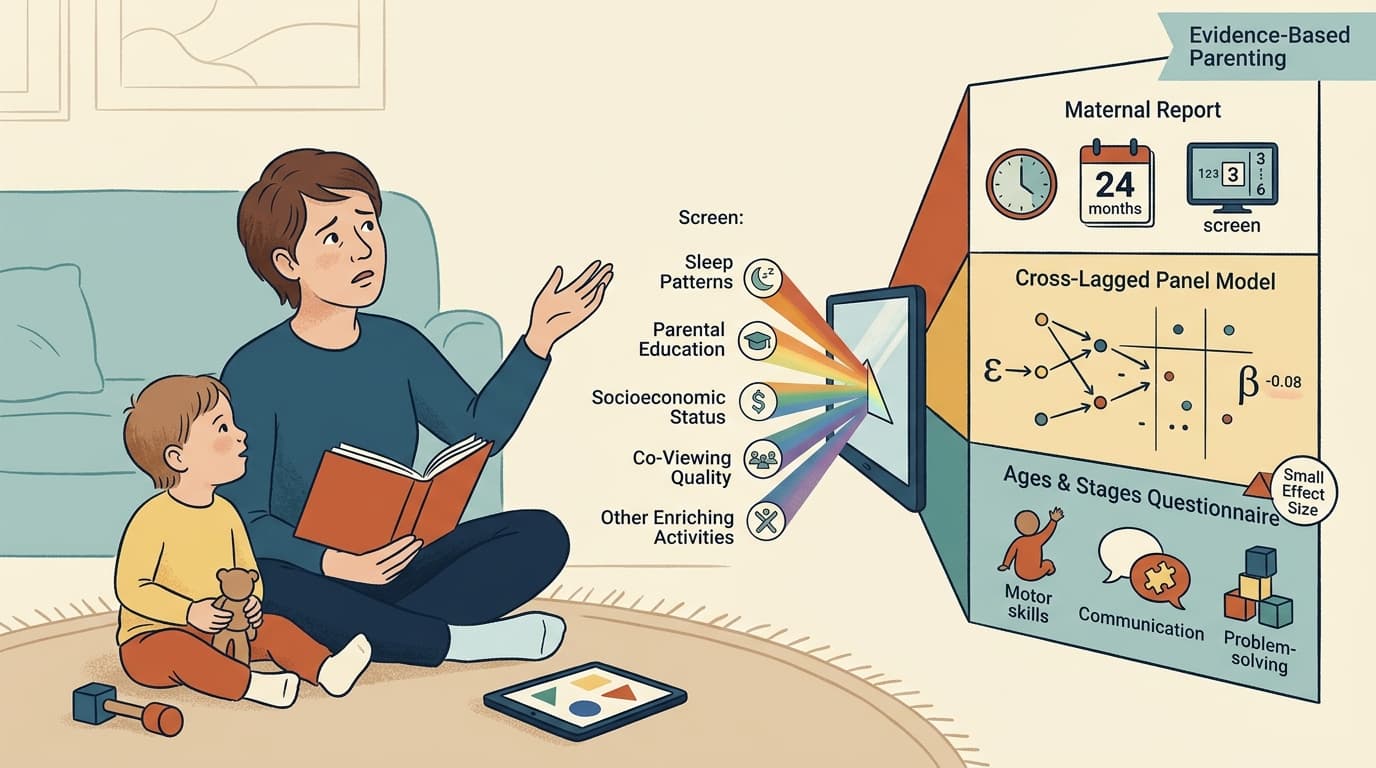

The most-cited longitudinal work on this question comes from a 2019 JAMA Pediatrics study that followed 2,441 mother-child pairs in Calgary, tracking screen time and developmental outcomes at 24, 36, and 60 months. The researchers used a cross-lagged panel model — a design that tries to tease apart directionality — and found that higher screen time at 24 and 36 months was associated with lower scores on developmental screening tests at 36 and 60 months, respectively. The effect sizes were small (β = −0.08 and −0.06), but statistically significant after controlling for stable between-person differences.

That's a real finding. It's also a carefully hedged one. The outcome measure was the Ages and Stages Questionnaire — a screening tool, not a diagnostic instrument. The exposure data came from maternal report, which introduces recall bias. And the effect sizes, while statistically significant in a sample of 2,441, are modest enough that their practical meaning for any individual child is genuinely unclear.
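To see how a result can be both statistically significant and practically small, here is a rough back-of-the-envelope sketch. It makes a simplifying assumption that the study itself does not: it treats each standardized coefficient as if it were a simple correlation in the full sample of 2,441. The published model was more complex, so read the numbers as an illustration of scale, not a reanalysis. The function name `t_statistic` is ours.

```python
import math


def t_statistic(r: float, n: int) -> float:
    """t statistic for a simple correlation r observed in a sample of size n."""
    return r * math.sqrt(n - 2) / math.sqrt(1 - r * r)


N = 2441  # mother-child pairs reported for the Calgary cohort
BETAS = (-0.08, -0.06)  # standardized coefficients quoted above

for beta in BETAS:
    # Assumption: beta is treated like a correlation, so beta**2 approximates
    # the share of variance in later screening scores linked to screen time.
    shared_variance = beta ** 2
    t = t_statistic(beta, N)
    significant = abs(t) > 1.96  # conventional two-sided 5% threshold
    print(
        f"beta={beta:+.2f}  shared variance={shared_variance:.2%}  "
        f"t={t:.2f}  significant={significant}"
    )
```

Under that assumption, both coefficients clear the conventional significance threshold while each accounts for well under 1% of the variation in scores. A large sample makes small effects detectable; it does not make them large.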

A more recent study published in JAMA Pediatrics found that greater screen time at age 1 was associated with developmental delays in communication and problem-solving at ages 2 and 4. Again — real signal, real longitudinal design. But "associated with" is doing a lot of work in that sentence. The cross-lagged design in the Calgary study was specifically built to address the directionality problem: are screens causing developmental differences, or are parents of children with developmental challenges using screens more as a coping tool? The honest answer is that both are probably true to some degree, and separating them is genuinely hard.

The Compliance Gap Nobody Talks About

Here's where the research gets interesting in a different way. A 2022 systematic review and meta-analysis in JAMA Pediatrics, drawing on 63 studies and over 89,000 participants, looked at how many children under five actually meet the screen-time guidelines pediatricians cite. Only about 25% of children under age 2 met the "no screen time" guideline, and only about 36% of children aged 2 to 5 met the "one hour per day" recommendation.

That means roughly three-quarters of toddlers are exceeding the under-2 guideline, and nearly two-thirds of preschoolers are exceeding the 2-to-5 guideline. Globally. Across dozens of studies.
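The arithmetic behind those fractions is just the complement of the compliance rates. A minimal sketch, using the rounded percentages quoted above (the dictionary keys and the function name `share_exceeding` are ours):

```python
# Approximate share of children meeting each guideline, as quoted above.
MET_GUIDELINE = {
    "under 2 (no screen time)": 0.25,
    "ages 2 to 5 (one hour per day)": 0.36,
}


def share_exceeding(share_meeting: float) -> float:
    """Share of children exceeding a guideline, given the share meeting it."""
    return 1.0 - share_meeting


for group, met in MET_GUIDELINE.items():
    print(f"{group}: {met:.0%} meet, {share_exceeding(met):.0%} exceed")
```

For the older group the result sits just under two-thirds (about 67%), which is why "nearly" is the right qualifier.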

This matters for how we interpret the developmental research. If the vast majority of children are exceeding guidelines, and we're not seeing a corresponding population-level collapse in developmental outcomes, that tells us something about scale. It doesn't mean the associations aren't real. It means the effect sizes are probably modest enough that they don't translate into dramatic individual-level harm for most children, which is consistent with what the studies actually report.

What the Research Can't Tell You

A 2025 editorial in the Korean journal Clinical and Experimental Pediatrics reviewing screen time and neurodevelopment in preschoolers flags a concern that runs through most of this literature: the research has gotten better at documenting associations, but the mechanisms remain underspecified. Is it the screen time itself? The displacement of other activities — physical play, parent-child conversation, sleep? The content being consumed? The context (co-viewing with a parent versus solo passive viewing)?

These distinctions matter enormously for practical guidance, and most studies don't have the granularity to answer them. The Calgary study measured "total hours per week" of screen exposure. That's a blunt instrument. An hour of a parent and toddler watching and talking about a nature documentary is probably not equivalent to an hour of a toddler alone with a tablet running autoplay YouTube.

The research also skews heavily toward passive screen consumption — television and video — because that's what was measurable when most of these studies were designed. The explosion of interactive apps, video calls with grandparents, and educational platforms has outpaced the longitudinal research. We're mostly drawing conclusions from TV-era data and applying them to a very different media environment.

What to Actually Do With This

The data here is mixed. The studies don't contradict each other; rather, they support modest associations while leaving the most important practical questions unanswered.

A few things the research does support clearly: the associations between heavy screen time and developmental screening scores are real, even if small. The directionality evidence suggests screens aren't just a symptom of developmental challenges — they may contribute to them, at least at high doses in early toddlerhood. And displacement matters: time in front of a screen is time not spent in conversation, physical play, or sleep, all of which have stronger developmental evidence behind them.

What the research doesn't support: treating any screen exposure before age two as categorically harmful, or assuming that a child who watches an hour of television daily is on a developmental trajectory meaningfully different from one who watches none. The effect sizes don't justify that level of alarm.

The most defensible takeaway isn't a minute count. It's a displacement question: what is screen time replacing in your child's day, and is that trade-off worth it? On a long car trip or a sick day, probably yes. As a default babysitter for hours every morning, the research suggests there's more reason for concern — not because screens are toxic, but because the things they crowd out genuinely matter.

That's a less satisfying answer than a bright-line rule. It's also what the data actually shows.