There's a particular kind of design system meeting that happens at growth-stage companies. Someone has just joined from a larger org — maybe they came from a team that shipped a proper three-tier token system — and they're proposing that the team adopt semantic tokens layered over primitive tokens, with component-level tokens as the third tier. The Figma variables are already mapped. The naming convention is documented. It looks rigorous.

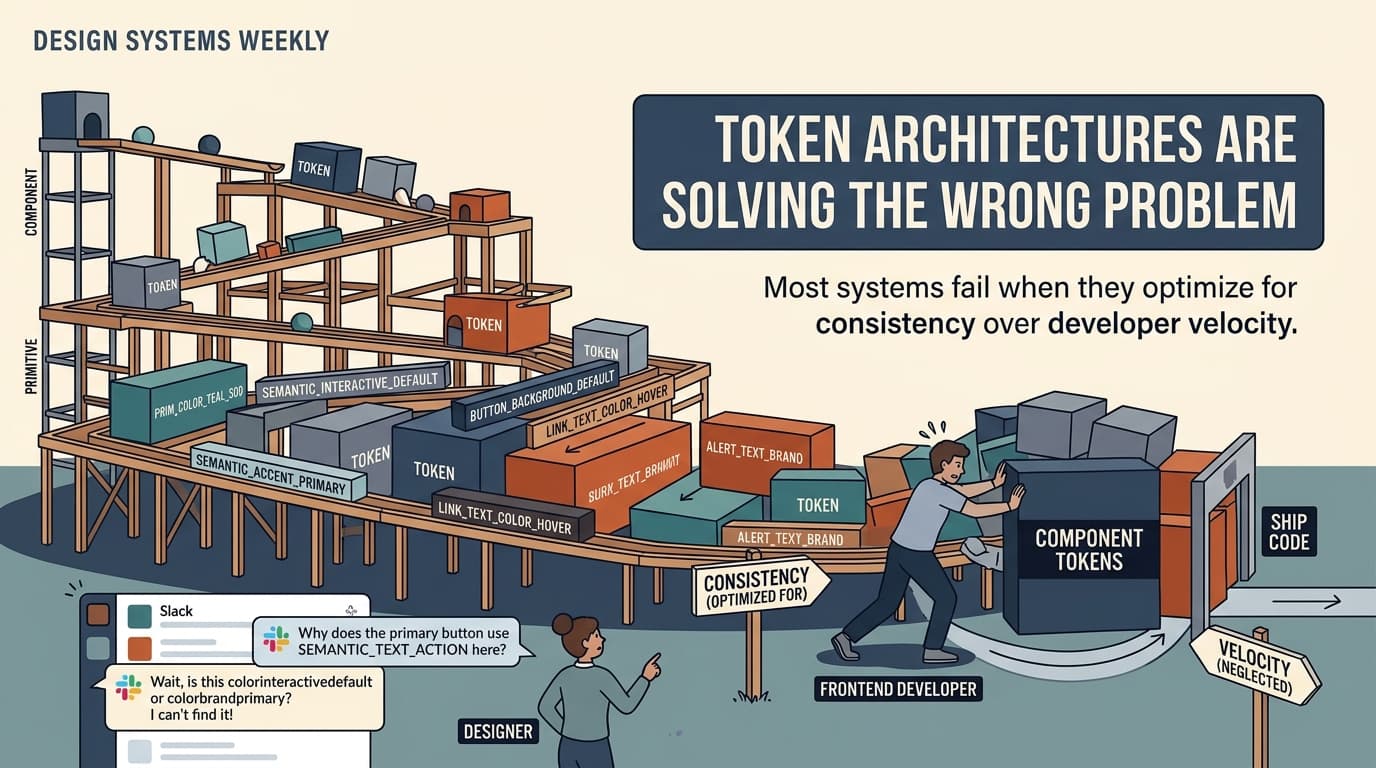

Six months later, the system has 400 tokens, three developers who actively route around it, and a Slack thread from last quarter asking why the primary button uses --color-interactive-default in one component and --color-brand-primary in another. Nobody remembers. The person who built it has moved on.

This is the failure mode that token architectures almost universally produce — not because tokens are a bad idea, but because the architecture gets optimized for a problem that most teams don't actually have.

Setup: What Token Architectures Are Actually Trying to Do

The canonical argument for a layered token system goes like this: primitive tokens encode raw values (--blue-500: #3B82F6), semantic tokens encode intent (--color-interactive-default: var(--blue-500)), and component tokens encode context (--button-background: var(--color-interactive-default)). Each layer insulates the layers above it from change. Swap out your brand color at the primitive level and the change propagates everywhere. Theming becomes a matter of overriding semantic tokens. The system is composable, scalable, and self-documenting.
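To make the layering concrete, here is a minimal TypeScript sketch that models the three tiers as a single map and follows var() references until it reaches a raw value. The token names and the hex value come from the examples above. The `TokenMap` type, the `resolveToken` helper, and the replacement color `#2563EB` are illustrative assumptions, and the sketch assumes each value is either a raw value or a single var() reference with no fallback.

```typescript
// A token map: each name maps either to a raw value or to a var() reference.
type TokenMap = Record<string, string>;

const tokens: TokenMap = {
  // Tier 1: primitive (raw value)
  "--blue-500": "#3B82F6",
  // Tier 2: semantic (intent)
  "--color-interactive-default": "var(--blue-500)",
  // Tier 3: component (context)
  "--button-background": "var(--color-interactive-default)",
};

// Follow var() references until a raw value is reached.
// Throws on unknown tokens and on reference cycles.
// Assumption: values never use the var(--name, fallback) form.
function resolveToken(map: TokenMap, name: string): string {
  const seen = new Set<string>();
  let current = name;
  while (true) {
    if (seen.has(current)) {
      throw new Error(`Cycle detected while resolving ${name}`);
    }
    seen.add(current);
    const value = map[current];
    if (value === undefined) {
      throw new Error(`Unknown token ${current}`);
    }
    const trimmed = value.trim();
    const isReference = trimmed.startsWith("var(") && trimmed.endsWith(")");
    if (!isReference) {
      return trimmed; // raw value reached
    }
    current = trimmed.slice(4, -1).trim(); // strip "var(" and ")"
  }
}

// Overriding only the primitive changes what every layer above resolves to.
// "#2563EB" is an arbitrary replacement color chosen for illustration.
const rebranded: TokenMap = { ...tokens, "--blue-500": "#2563EB" };
```

Resolving `--button-background` against `tokens` walks two references before it lands on `#3B82F6`; against `rebranded` the same walk lands on `#2563EB` without any change to the semantic or component entries. That two-hop walk is also the indirection a developer has to perform mentally when reading a component.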

This is a coherent architecture. It's also solving a problem that most startups won't encounter for years, if ever.

The actual problem most teams have is simpler and more immediate: they need developers to stop hardcoding hex values, they need Figma and code to stay roughly in sync, and they need new components to feel visually consistent without requiring a design review for every spacing decision. A flat list of 40 well-named tokens handles all three of those problems. The three-tier architecture handles them too — but it also introduces a layer of indirection that creates its own class of problems.
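The first of those three problems, hardcoded hex values, can be checked mechanically no matter how many tiers the token system has. The following is a rough TypeScript sketch of such a check, not a replacement for a real linter: `findHardcodedHex` and `HardcodedValue` are hypothetical names, the scan is naive and line-based (one declaration per line), and it treats custom property definitions as the one place where raw values are allowed.

```typescript
// Matches 3-, 4-, 6-, or 8-digit hex colors such as #fff or #3B82F6.
const HEX_COLOR = /#(?:[0-9a-fA-F]{8}|[0-9a-fA-F]{6}|[0-9a-fA-F]{3,4})\b/g;

interface HardcodedValue {
  line: number; // 1-based line number in the input
  property: string; // the CSS property that carries the literal
  value: string; // the hex literal that was found
}

// Naive scan: assumes one declaration per line and no comments.
function findHardcodedHex(css: string): HardcodedValue[] {
  const findings: HardcodedValue[] = [];
  css.split("\n").forEach((text, index) => {
    const colon = text.indexOf(":");
    if (colon === -1) return; // no colon, so not a declaration
    const property = text.slice(0, colon).trim();
    // Token definitions (custom properties) are where raw values belong.
    if (property.startsWith("--")) return;
    const matches = text.slice(colon + 1).match(HEX_COLOR) ?? [];
    for (const value of matches) {
      findings.push({ line: index + 1, property, value });
    }
  });
  return findings;
}

// Hypothetical stylesheet: one token definition, one use, one hardcoded value.
const sample = [
  ":root {",
  "  --blue-500: #3B82F6;",
  "}",
  ".button {",
  "  background: var(--blue-500);",
  "  border-color: #3B82F6;",
  "}",
].join("\n");

const findings = findHardcodedHex(sample);
```

For the sample above, the only finding is the `border-color` declaration on line 6. Nothing in the check depends on whether the token it should have used is a primitive, a semantic token, or a component token.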

The indirection is the issue. When a developer reaches for a token, they're making a decision about intent. Should this background be --color-surface-default or --color-background-primary? If those tokens currently resolve to the same value, the distinction is invisible in the browser — but it's a semantic commitment that will matter when you retheme. Except most startups don't retheme. They rebrand, which is a different operation that usually requires touching components anyway. The semantic layer was built to enable a workflow that the team doesn't use.

As one practitioner put it, tokens describe what a decision is, not why it was made. A three-tier token architecture creates the appearance of documented intent — the naming convention implies a rationale — but the rationale itself is never captured. So you end up with a system that looks self-explanatory and isn't.

Mechanism: How the Architecture Generates Debt

The debt accumulates in a specific sequence, and it is worth tracing carefully because each step feels reasonable in isolation.

Step one: the naming convention ships without decision records. The team adopts semantic token names — --color-interactive-default, --color-interactive-hover, --color-interactive-disabled — and maps them to primitives. The mapping makes sense at the time. The person who made it understood the intent. But as the same practitioner documents, the why behind those mappings doesn't live anywhere in the system. It lives in the head of whoever was in the room.
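One way to picture what is missing is to imagine the rationale as a field that travels with the token. The TypeScript sketch below is purely illustrative: the `rationale` field, the `SemanticToken` shape, and the `tokensMissingRationale` helper are assumptions rather than part of any token standard, and the primitives `--blue-600` and `--gray-300` are made up for the example.

```typescript
interface SemanticToken {
  name: string;
  value: string; // reference to a primitive token
  rationale?: string; // hypothetical field: why this mapping was chosen
}

// Semantic names come from the step above; the primitives other than
// --blue-500 and the rationale text are placeholders for illustration.
const interactiveTokens: SemanticToken[] = [
  {
    name: "--color-interactive-default",
    value: "var(--blue-500)",
    rationale: "Placeholder: the reason recorded when the mapping was made.",
  },
  { name: "--color-interactive-hover", value: "var(--blue-600)" },
  { name: "--color-interactive-disabled", value: "var(--gray-300)" },
];

// List the tokens whose mapping has no recorded reason.
function tokensMissingRationale(list: SemanticToken[]): string[] {
  return list
    .filter((t) => t.rationale === undefined || t.rationale.trim() === "")
    .map((t) => t.name);
}

const undocumented = tokensMissingRationale(interactiveTokens);
```

Here `undocumented` contains the hover and disabled tokens. In the scenario described above, every token would be on that list, and nothing in the naming convention would reveal it.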

Step two: the system grows faster than the naming convention scales. New components need tokens. The existing semantic layer doesn't quite cover the new cases — there's no token for a destructive interactive state in a surface context, or the existing --color-feedback-error doesn't work at the opacity the design calls for. Someone adds a new token. Then another. The naming convention starts to bend. Exceptions accumulate.

Step three: developers start pattern-matching instead of reasoning. When the token system is large and the naming convention is ambiguous, developers stop trying to understand the semantic intent and start copying from nearby components. The button uses --color-interactive-default, so the link probably should too. The card background uses --color-surface-raised, so the modal probably should too. This works until it doesn't — until a theming decision or a design update reveals that two components that looked identical were using different tokens that happened to resolve to the same value.
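The hidden coupling in step three can be made visible by grouping semantic tokens by the raw value they currently resolve to. This is a minimal TypeScript sketch under stated assumptions: the semantic names appear in this article, but the primitives `--white` and `--gray-50`, their hex values, and the mapping itself are hypothetical, as are the helper names `resolve` and `findIndistinguishableTokens`.

```typescript
type TokenMap = Record<string, string>;

// Hypothetical mapping: two semantic tokens share one primitive.
const tokens: TokenMap = {
  "--white": "#FFFFFF",
  "--gray-50": "#F9FAFB",
  "--color-surface-default": "var(--white)",
  "--color-background-primary": "var(--white)",
  "--color-surface-raised": "var(--gray-50)",
};

// True when the value is a single var() reference (no fallback assumed).
function isReference(value: string): boolean {
  const trimmed = value.trim();
  return trimmed.startsWith("var(") && trimmed.endsWith(")");
}

// Follow references to a raw value; the depth limit guards against cycles.
function resolve(map: TokenMap, name: string, depth = 0): string {
  if (depth > 10) throw new Error(`Reference chain too deep at ${name}`);
  const value = map[name];
  if (value === undefined) throw new Error(`Unknown token ${name}`);
  const trimmed = value.trim();
  if (!isReference(trimmed)) return trimmed;
  return resolve(map, trimmed.slice(4, -1).trim(), depth + 1);
}

// Group non-primitive tokens by resolved value and keep groups of two or
// more: these tokens look identical in the browser today.
function findIndistinguishableTokens(map: TokenMap): string[][] {
  const byValue = new Map<string, string[]>();
  Object.keys(map).forEach((name) => {
    const value = map[name];
    if (value === undefined || !isReference(value)) return; // skip primitives
    const resolved = resolve(map, name).toLowerCase();
    const group = byValue.get(resolved) ?? [];
    group.push(name);
    byValue.set(resolved, group);
  });
  return Array.from(byValue.values()).filter((group) => group.length > 1);
}

const lookalikes = findIndistinguishableTokens(tokens);
```

With this mapping, `lookalikes` holds a single group containing `--color-surface-default` and `--color-background-primary`. A report like this does not say which token a component should use; it only marks the places where copying from a neighbor cannot be checked by eye.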

Step four: the system forks. Some developers stop using the token system for new work and start using Tailwind utility classes directly, or hardcoding values, or building component-local CSS variables that don't connect to the global system. This isn't laziness — it's a rational response to a system where the cost of doing things correctly (understanding the semantic intent, finding the right token, verifying it resolves correctly) exceeds the cost of doing things locally. The system becomes a partial constraint rather than