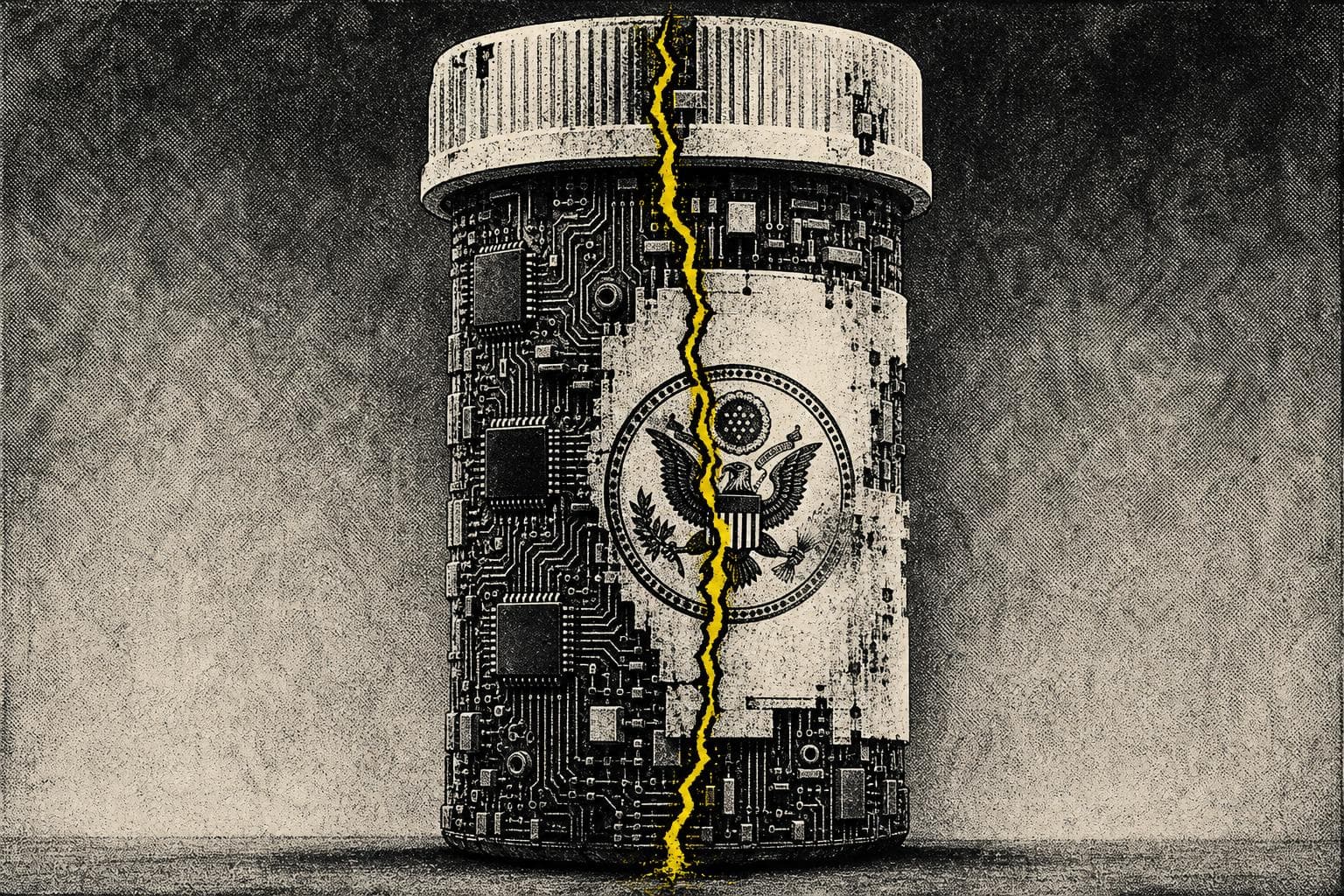

The conventional wisdom on AI regulation has been building for years, and it finally has a policy hook: the Trump administration is reportedly considering a pre-release vetting process for advanced AI models — something one official described as approving them "just like an FDA drug." Cue the chorus of approval from the usual quarters. Finally, someone is taking this seriously. Finally, guardrails.

Here's the problem: the people cheering loudest for AI pre-approval have never seriously reckoned with what the FDA model actually costs.

The FDA Analogy Is a Warning, Not a Template

Kevin Hassett, director of the National Economic Council, floated the FDA comparison as though it were self-evidently reassuring. It isn't. As Neil Chilson and Adam Thierer argue, the FDA's approval process has well-documented human costs. Beta blockers, now a standard treatment for hypertension, were available in Europe years before the FDA cleared them for the same uses in the United States. Experts have estimated that delays like this cost thousands of lives per year. The quest for perfect safety, as they put it, has a high body count.

That's not an argument against all safety review. It's an argument against treating regulatory delay as inherently neutral. Delay has costs. Blocked access has costs. When you slow down a technology that could help people, the harm from that slowdown is real — it's just invisible, distributed, and easy to ignore.

The media consensus on AI regulation almost never accounts for this. The framing is always: what are the risks of moving too fast? The question that doesn't get asked: what are the risks of moving too slowly?

Informal Power Is Worse Than Formal Rules

The more immediate problem isn't hypothetical. It's already happening — and it's messier than any formal regulatory regime would be.

According to reporting at Don't Worry About the Vase, the White House has already ordered Anthropic not to expand access to a model called Mythos as part of a project called Glasswing. No formal process. No published criteria. No legal authority clearly established. The White House said no, and Anthropic complied — because what else are you going to do?

This is the part the "regulate AI now" crowd should find alarming, and mostly doesn't. Informal, ad-hoc government control over which companies can deploy which models to which customers is not a safety framework. It's a power structure. It favors the connected. It can't be appealed. It enables exactly the kind of corruption and insider advantage that formal regulatory processes, for all their flaws, at least nominally constrain.

As the same analysis notes: arbitrary and informal government decision-making can be even worse than formal regulatory regimes. The problem isn't just that the White House might make bad calls. It's that there's no mechanism to know what criteria they're using, no way to plan around them, and no accountability when they get it wrong.

The White House Doesn't Know What It Wants — And That's the Tell

Watch the internal contradiction play out in real time. An administration official says AI should be approved "just like an FDA drug." Then the White House distances itself, with a senior official insisting they want "partnership" with companies, not "government regulation." Then chief of staff Susie Wiles walks back the FDA framing while calling Trump "the most forward-leaning president on innovation in American history."

Three different signals in four days. What this reveals isn't a policy. It's a negotiation happening in public, with the press serving as a source of pressure. And the press, predictably, is pushing in one direction: toward more oversight, more process, more control. The coverage treats "considering regulation" as responsible and "backing away from regulation" as capitulating to industry.

That framing does real work. It establishes as the default assumption that regulatory intervention is the cautious, serious position and that skepticism about it is reckless. But the actual cautious position, given how little anyone in government demonstrably understands about frontier AI systems, might be: don't build a veto infrastructure before you know what you're vetoing.

What Responsible Skepticism Actually Looks Like

None of this means AI development should be consequence-free or that powerful models should face zero scrutiny. The argument isn't "no oversight ever." It's that the rush to install a pre-approval regime — driven by anxiety, media pressure, and the institutional comfort of doing something — is likely to produce a system that serves bureaucratic interests more than public ones.

The test is always the same: when the proposed solution is "create a process," ask who controls the process, what criteria they use, and who gets hurt when the process moves slowly. Those questions are conspicuously absent from most AI regulation coverage.

Watch for whether the rumored executive order establishing an AI working group actually materializes — and if it does, whether it comes with published criteria or just discretionary authority. The difference between those two outcomes is the difference between a safety framework and a power grab dressed in safety language.