For most of its life on Mars, Perseverance navigated the way sailors did before GPS: dead reckoning. Take a picture, identify a rock, calculate how far you've moved. Repeat. It works, but errors accumulate, and over longer distances positional drift can reach as much as 35 meters. On a planet where mission controllers are anywhere from about 3 to 22 minutes away by radio, depending on where the two planets are in their orbits, and can't course-correct in real time, 35 meters of uncertainty is a serious operational constraint.

That constraint just changed.

The Fix Is Elegant: Match What You See to What You Know

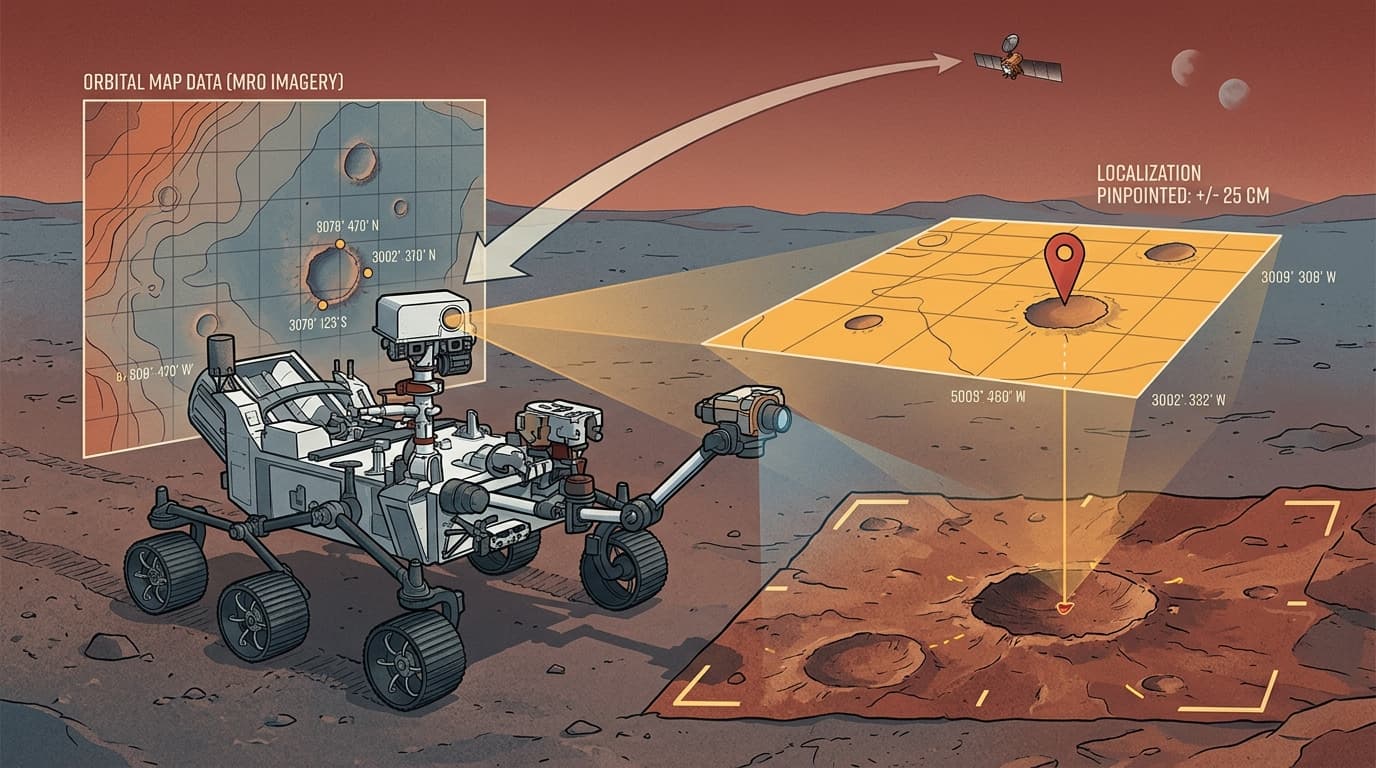

Perseverance has been upgraded with a system called Mars Global Localization, and the core idea is almost obvious in retrospect. The rover captures panoramic images with its navigation camera, then compares them against high-resolution terrain maps stored onboard — maps built from imagery taken by the Mars Reconnaissance Orbiter. The onboard processor runs a location algorithm that cross-references the two, pinpointing the rover's position to within roughly 25 centimeters. The whole computation takes about two minutes.
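The matching step can be illustrated with a toy example. The sketch below is not the rover's algorithm, whose details are not described here; it swaps in the simplest possible stand-in, an exhaustive search that slides a small observed patch over a stored grid and keeps the position with the lowest sum of squared differences. The grid values, the patch, and the function names are all hypothetical.

```python
# Illustrative only: brute-force patch matching on a tiny height grid.
# This is a stand-in technique, not the flight software's method.

def sum_squared_diff(grid, patch, row, col):
    """Sum of squared differences between patch and the grid window at (row, col)."""
    total = 0.0
    for r, patch_row in enumerate(patch):
        for c, value in enumerate(patch_row):
            diff = grid[row + r][col + c] - value
            total += diff * diff
    return total


def locate_patch(grid, patch):
    """Return (row, col) of the grid window that best matches patch."""
    patch_h, patch_w = len(patch), len(patch[0])
    best_pos, best_score = None, float("inf")
    for row in range(len(grid) - patch_h + 1):
        for col in range(len(grid[0]) - patch_w + 1):
            score = sum_squared_diff(grid, patch, row, col)
            if score < best_score:
                best_pos, best_score = (row, col), score
    return best_pos


# Hypothetical stored map: each cell is a terrain height in meters.
orbital_map = [
    [1.0, 1.2, 1.1, 0.9, 0.8],
    [1.3, 2.0, 2.4, 1.0, 0.7],
    [1.1, 2.2, 3.1, 1.5, 0.6],
    [0.9, 1.4, 1.8, 2.6, 0.5],
    [0.8, 0.9, 1.0, 1.2, 0.4],
]

# Hypothetical observation: the window at row 1, col 2 with small sensor noise.
observed = [
    [2.45, 1.02],
    [3.05, 1.48],
]

print(locate_patch(orbital_map, observed))
```

Even with noise in the observation, the best-scoring window is the one the patch was taken from. A real system has to cope with differences in viewpoint, lighting, and resolution between ground-level and orbital imagery, which this toy ignores entirely.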

That's the trade-off worth examining: about two minutes of compute time cuts positional error from as much as 35 meters to roughly 25 centimeters, an improvement of about 140-fold. For a rover that might drive a few hundred meters in a sol, that's an extraordinary exchange rate.

The constraint that drove this design is the communication lag. Real-time remote driving from Earth is impossible given Mars's distance, so every navigation decision the rover can make on its own is one the mission doesn't have to wait a round-trip signal for. Better localization means longer autonomous drives with fewer check-ins, which means more ground covered per sol without proportionally more risk.
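To put numbers on that lag, the delay is just distance divided by the speed of light. The short sketch below computes it for two rounded Earth-Mars distances; the distance constants are approximations chosen for illustration, and the function name is hypothetical.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # defined value, km per second


def one_way_delay_minutes(distance_km):
    """Radio signal travel time in minutes over the given distance."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0


# Rounded, approximate Earth-Mars distances (assumptions for illustration).
CLOSEST_KM = 55e6
FARTHEST_KM = 400e6

for label, distance in (("closest", CLOSEST_KM), ("farthest", FARTHEST_KM)):
    one_way = one_way_delay_minutes(distance)
    print(f"{label}: {one_way:.1f} min one way, {2 * one_way:.1f} min round trip")
```

Even at closest approach, a command-and-response cycle costs several minutes, and near the far end of the range it approaches three quarters of an hour, which is why joystick-style driving is off the table.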

What "Autonomous" Actually Means Here

It's easy to hear "autonomous navigation" and picture a rover making judgment calls like a self-driving car. The reality is more constrained — and more interesting for it. The autonomy isn't about high-level mission decisions; it's about the rover knowing precisely where it is so that its existing hazard-avoidance systems can operate on accurate information.

Visual odometry, the old method, works well over short distances on predictable terrain. The problem is that Mars isn't predictable. Loose regolith, slopes, wheel slip — each introduces small errors that compound over kilometers. Perseverance has now covered approximately 40 kilometers on Mars, and at that scale, cumulative drift becomes a genuine mission risk. The Mars Global Localization system resets the error budget every time it runs, which means longer traverses stay safe rather than degrading into guesswork.

The engineering insight here is about error architecture. Visual odometry is a relative positioning system: it knows where you are relative to where you were. The new system is absolute: it knows where you are relative to the planet. Combining them gives you the best of both: continuous tracking between fixes, with a hard reset to ground truth each time the rover runs the roughly two-minute localization.
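A small simulation makes the relative-plus-absolute idea concrete. This is a deliberately simplified one-dimensional sketch, not a model of the rover: it assumes odometry over-reports every step by a fixed fraction (the 2% figure is invented), and that an absolute fix snaps the estimate back to within 0.25 meters of truth. All names and parameters are hypothetical.

```python
def drive_errors(steps, step_m, drift_fraction, fix_every=None, fix_error_m=0.25):
    """Return the position error in meters after each step of a straight drive.

    Odometry is modeled as over-reporting each step by drift_fraction.
    If fix_every is set, an absolute fix replaces the estimate every
    fix_every steps, leaving only fix_error_m of residual error.
    """
    true_pos = 0.0
    est_pos = 0.0
    errors = []
    for step in range(1, steps + 1):
        true_pos += step_m
        # Relative update: the estimate inherits all earlier error.
        est_pos += step_m * (1.0 + drift_fraction)
        # Absolute update: discard accumulated drift, keep only the fix error.
        if fix_every and step % fix_every == 0:
            est_pos = true_pos + fix_error_m
        errors.append(abs(est_pos - true_pos))
    return errors


# Hypothetical 500 m drive in 1 m steps with 2% odometry drift.
odometry_only = drive_errors(steps=500, step_m=1.0, drift_fraction=0.02)
with_fixes = drive_errors(steps=500, step_m=1.0, drift_fraction=0.02, fix_every=100)

print(f"odometry only, final error: {odometry_only[-1]:.2f} m")
print(f"with fixes, worst error:    {max(with_fixes):.2f} m")
```

With these made-up numbers, odometry alone ends the drive about 10 meters off, while the hybrid's error never grows past a little over 2 meters because each fix caps how long drift can accumulate. The worst-case error is set by the spacing between fixes, not by the total distance driven.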

The Broader Pattern This Points To

This upgrade is one data point in a larger shift. The Apollo-era model of planetary exploration was fundamentally Earth-directed: humans on the ground made decisions, uplinked commands, and waited for results. That model works, but it's rate-limited by the speed of light and the bandwidth of human attention.

What's emerging instead is a model where the spacecraft carries enough intelligence to handle the tactical layer — obstacle avoidance, precise localization, route optimization — while Earth handles the strategic layer: where to go, what to prioritize, what the science means. The communication lag that once made Mars exploration slow and cautious becomes less of a bottleneck when the rover can be trusted to execute a multi-kilometer traverse without hand-holding.

The same logic is showing up in non-rover contexts. Rhea Space Activity is testing its AutoNav system, built on software developed at JPL and flown on NASA's Deep Impact mission, on a hypersonic reentry vehicle, where the plasma sheath that forms during reentry blacks out GPS and creates a navigation gap that only onboard celestial navigation can fill. Different environment, same underlying problem: the vehicle needs to know where it is when external signals can't reach it.

The pattern suggests that autonomous navigation isn't a single technology — it's a design philosophy. Build the vehicle to own its own positional awareness. Let the ground focus on what only humans can do.

For Perseverance specifically, watch for how mission planners use the expanded traverse capability over the next several months. If the science return per sol increases measurably, that's the real proof of concept — not the algorithm, but what it enables.