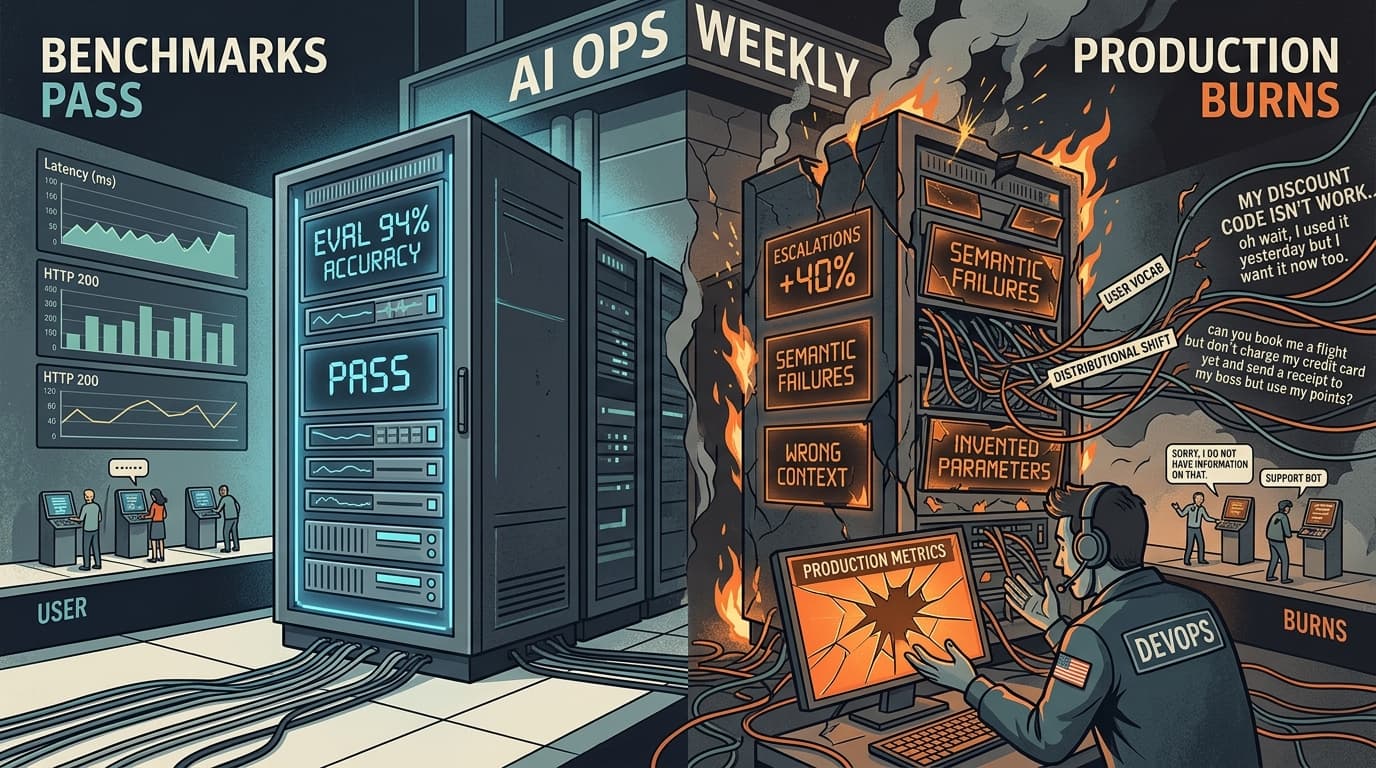

A team ships a customer support bot with 94% accuracy on their internal test suite. Two weeks into production, escalations are up 40%. The model is fluent, latency is fine, HTTP 200s everywhere. Nothing in their monitoring catches it. The failures are semantic — wrong context retrieved, confident answers to questions outside scope, tool calls with invented parameters.

This is the benchmark trap in its purest form.

The Gap Between Test Accuracy and Production Behavior

Static benchmarks measure the wrong thing. They answer "did the model produce the correct output for this input?" — which is a useful question during development and a dangerously incomplete one in production.

One AI engineer's retrospective puts it plainly: teams with 95%+ accuracy on evaluation datasets routinely see 30–40% failure rates in production. The culprit isn't model quality — it's distributional shift. Real users ask questions your QA team never imagined, combine requests in unexpected ways, and use vocabulary that sits just outside your test distribution. Static test suites don't see any of that coming.
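A crude way to see the vocabulary part of this drift is to measure how much of your production traffic uses words your test suite never contains. The sketch below is a hypothetical proxy, not a full distribution-shift detector. It tokenizes naively and reports the share of production tokens that are unseen in the eval set, plus the most frequent unseen ones as candidates for new test cases.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Crude tokenizer: lowercase, keep runs of letters, digits, and apostrophes.
    return re.findall(r"[a-z0-9']+", text.lower())


def unseen_token_rate(test_queries: list[str], prod_queries: list[str]) -> float:
    """Share of production token occurrences whose token never appears in the test set."""
    test_vocab = {tok for q in test_queries for tok in tokenize(q)}
    prod_counts = Counter(tok for q in prod_queries for tok in tokenize(q))
    total = sum(prod_counts.values())
    if total == 0:
        return 0.0
    unseen = sum(n for tok, n in prod_counts.items() if tok not in test_vocab)
    return unseen / total


def top_unseen(test_queries: list[str], prod_queries: list[str], k: int = 5) -> list[tuple[str, int]]:
    """Most frequent production tokens missing from the test set."""
    test_vocab = {tok for q in test_queries for tok in tokenize(q)}
    prod_counts = Counter(tok for q in prod_queries for tok in tokenize(q))
    missing = Counter({tok: n for tok, n in prod_counts.items() if tok not in test_vocab})
    return missing.most_common(k)
```

A rising unseen-token rate from week to week is a hint, not proof, that users have moved away from what the suite covers. It says nothing about familiar words used in unfamiliar combinations, which is the other half of the shift described above.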

The deeper problem is that LLM failures are often silent. The system returns HTTP 200. Latency looks normal. The response reads fluently. But the retrieved context was ignored, the tool was called with invented parameters, or the model stated something false with full confidence. Standard application monitoring — uptime, CPU, error rates — misses the semantic layer entirely.

What Production Eval Actually Requires

The shift is from static correctness to continuous behavioral monitoring. Three things have to change:

You need a failure taxonomy before you need metrics. Teams that skip this end up triaging everything as "it gave a bad answer," which makes prioritization impossible. PromptLayer's analysis of production LLM failures distinguishes between severity classes: a slightly verbose answer is a different problem from a medical chatbot providing dangerous advice. Without that taxonomy, your eval numbers are noise.
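A taxonomy can start as nothing more than an enum of failure modes, each mapped to a severity, so that labeled failures sort into a priority order instead of one undifferentiated "bad answer" bucket. The modes and severity levels below are hypothetical examples drawn from the failures this article describes, not PromptLayer's actual classes.

```python
from dataclasses import dataclass
from enum import Enum, IntEnum


class Severity(IntEnum):
    # Higher value means more urgent. Levels are illustrative.
    COSMETIC = 1  # e.g. a slightly verbose answer
    DEGRADED = 2  # e.g. ignored retrieved context, wrong but not dangerous
    HARMFUL = 3   # e.g. dangerous advice in a medical or financial setting


class FailureMode(Enum):
    VERBOSE = "verbose"
    IGNORED_CONTEXT = "ignored_context"
    INVENTED_TOOL_PARAM = "invented_tool_param"
    OUT_OF_SCOPE_ANSWER = "out_of_scope_answer"
    DANGEROUS_ADVICE = "dangerous_advice"


SEVERITY = {
    FailureMode.VERBOSE: Severity.COSMETIC,
    FailureMode.IGNORED_CONTEXT: Severity.DEGRADED,
    FailureMode.INVENTED_TOOL_PARAM: Severity.DEGRADED,
    FailureMode.OUT_OF_SCOPE_ANSWER: Severity.DEGRADED,
    FailureMode.DANGEROUS_ADVICE: Severity.HARMFUL,
}


@dataclass
class LabeledFailure:
    trace_id: str
    mode: FailureMode


def prioritize(failures: list[LabeledFailure]) -> list[tuple[FailureMode, int]]:
    """Count failures per mode, ordered by severity first and frequency second."""
    counts: dict[FailureMode, int] = {}
    for f in failures:
        counts[f.mode] = counts.get(f.mode, 0) + 1
    return sorted(counts.items(), key=lambda kv: (SEVERITY[kv[0]], kv[1]), reverse=True)
```

The sort key encodes the point of the taxonomy: five verbose answers still rank below a single dangerous one.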

The signals that matter aren't in your logs. HTTP codes and latency tell you the infrastructure is healthy. They say nothing about whether the model ignored retrieved context, hallucinated a parameter, or confidently answered outside its scope. Useful signals live at the semantic layer: retrieval relevance scores, tool call validation, output consistency across similar inputs, and user correction patterns. These require deliberate instrumentation, not default observability tooling.
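Tool call validation is one of the cheapest of these signals to instrument: compare every call the model emits against the parameters the tool actually declares. The sketch below assumes a hypothetical minimal schema format (parameter name to Python type, plus a set of required names); in practice you might validate against the same JSON Schema you already send to the model.

```python
from dataclasses import dataclass, field


@dataclass
class ToolSpec:
    # Hypothetical minimal schema: parameter name -> expected Python type.
    name: str
    params: dict[str, type]
    required: set[str] = field(default_factory=set)


def validate_tool_call(spec: ToolSpec, tool_name: str, args: dict) -> list[str]:
    """Return a list of problems; an empty list means the call looks valid."""
    if tool_name != spec.name:
        return [f"unknown tool: {tool_name}"]
    problems = []
    for key in args:
        if key not in spec.params:
            # The silent failure: a parameter the tool never declared.
            problems.append(f"invented parameter: {key}")
    for key in sorted(spec.required - set(args)):
        problems.append(f"missing required parameter: {key}")
    for key, value in args.items():
        expected = spec.params.get(key)
        if expected is not None and not isinstance(value, expected):
            problems.append(f"wrong type for {key}: expected {expected.__name__}")
    return problems
```

Each non-empty result is a semantic failure that would otherwise sit behind an HTTP 200; counting them per tool over time gives you a metric your default observability stack does not.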

LLM-as-judge fills the gap that code-based evals can't. The Pragmatic Engineer's eval guide draws a clean line: use code-based evals for deterministic failures (did the function get called? did the output match the schema?) and LLM-as-judge for subjective quality assessment. The trap is using only one. Code-based evals miss nuanced failures; LLM judges introduce their own biases and need calibration against human labels before you trust them.
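One way to avoid relying on only one kind of eval is to layer them: deterministic checks run first, and the judge is consulted only for the subjective question code cannot answer. In this sketch, `judge` is a hypothetical callable standing in for whatever LLM-as-judge call you use (returning a score in [0, 1]); it is injected as a parameter so the pipeline can be tested without a model.

```python
import json
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class EvalResult:
    passed: bool
    reason: str
    judge_score: Optional[float] = None


def evaluate(
    raw_output: str,
    tools_called: list[str],
    expected_tool: str,
    required_keys: set[str],
    judge: Callable[[dict], float],
    judge_threshold: float = 0.7,
) -> EvalResult:
    """Run code-based checks first; call the (hypothetical) judge only if they pass."""
    # Code-based check 1: did the expected function get called?
    if expected_tool not in tools_called:
        return EvalResult(False, f"expected tool {expected_tool!r} was not called")
    # Code-based check 2: does the output parse as a JSON object with the required keys?
    try:
        payload = json.loads(raw_output)
    except json.JSONDecodeError:
        return EvalResult(False, "output is not valid JSON")
    if not isinstance(payload, dict):
        return EvalResult(False, "output is not a JSON object")
    missing = required_keys - set(payload)
    if missing:
        return EvalResult(False, f"missing keys: {sorted(missing)}")
    # Subjective check: spend a judge call only on structurally valid outputs.
    score = judge(payload)
    if score >= judge_threshold:
        return EvalResult(True, "judge approved", judge_score=score)
    return EvalResult(False, "judge score below threshold", judge_score=score)
```

Ordering the checks this way also means the judge's compute bill covers only outputs that already passed the cheap tests.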

The Human Review Illusion

One of the most expensive misconceptions in production AI: "we'll catch errors with human review." That same engineer's retrospective documents a case where human reviewers missed 60% of factual errors in generated content — because the errors looked plausible. Fluency is not accuracy. Reviewers pattern-match on surface quality, not semantic correctness.

The pattern that actually works is to let automated evaluation catch the high-volume, detectable failures and to focus human review on the edge cases your automated evals flag as uncertain. Human attention is a scarce resource; spend it where automated systems have low confidence, not as a general-purpose quality filter.
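A minimal sketch of that routing, assuming your automated evaluator returns a score in [0, 1]: confident passes and confident failures are handled automatically, and only the uncertain band in between reaches a human queue, most ambiguous first. The band boundaries are placeholders to tune against your own labeled data.

```python
from enum import Enum


class Route(Enum):
    AUTO_PASS = "auto_pass"
    AUTO_FAIL = "auto_fail"
    HUMAN_REVIEW = "human_review"


def route(score: float, fail_below: float = 0.3, pass_above: float = 0.8) -> Route:
    """Send only low-confidence cases to humans; thresholds are illustrative."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score must be in [0, 1], got {score}")
    if score < fail_below:
        return Route.AUTO_FAIL
    if score > pass_above:
        return Route.AUTO_PASS
    return Route.HUMAN_REVIEW


def review_queue(scored: list[tuple[str, float]], fail_below: float = 0.3, pass_above: float = 0.8) -> list[str]:
    """Trace IDs needing human review, closest to the middle of the band first."""
    mid = (fail_below + pass_above) / 2
    uncertain = [(tid, s) for tid, s in scored if route(s, fail_below, pass_above) is Route.HUMAN_REVIEW]
    uncertain.sort(key=lambda item: abs(item[1] - mid))
    return [tid for tid, _ in uncertain]
```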

The through-line here is that production eval is a systems problem. Benchmarks are a development tool. They tell you whether the model is capable of the task under controlled conditions. They don't tell you whether your retrieval pipeline is feeding it garbage, whether your prompt degrades under real input variance, or whether your users are doing things your test suite never anticipated.

The teams that catch failures before users do have instrumented the semantic layer, built a failure taxonomy that drives prioritization, and stopped treating benchmark scores as a proxy for production health.

Eval Patterns

Error analysis before metrics. Before building an eval suite, spend time in your production logs manually reviewing failures. Hamel Husain's eval framework treats error analysis as the foundation — you can't write good evals for failure modes you haven't characterized. A week of manual review will surface patterns that no benchmark would have predicted.
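Error analysis needs no tooling to start, but its output should end up countable. A sketch, assuming reviewers attach one short free-form label to each failing trace they read (the labels here are hypothetical): tally the labels, then let frequency decide which failure modes earn an automated eval first. This is an illustration of the idea, not Hamel Husain's specific process.

```python
from collections import Counter


def summarize_annotations(annotations: list[dict]) -> list[tuple[str, int, float]]:
    """Count reviewer-assigned failure labels.

    `annotations` holds one dict per observed failure, e.g.
    {"trace_id": "t-17", "label": "ignored_context"}. Returns
    (label, count, share of all failures), most frequent first.
    """
    counts = Counter(a["label"] for a in annotations)
    total = sum(counts.values())
    return [(label, n, n / total) for label, n in counts.most_common()]


def eval_candidates(summary: list[tuple[str, int, float]], min_share: float = 0.1) -> list[str]:
    """Labels common enough to justify writing a dedicated automated eval."""
    return [label for label, _, share in summary if share >= min_share]
```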

Calibrate your LLM judge. If you're using a model to evaluate model outputs, treat it like any other classifier: measure its agreement rate with human labels on a held-out set before trusting it in CI. An uncalibrated judge introduces systematic blind spots, and those are harder to notice than the obvious gap of having no judge at all.
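Treating the judge as a classifier means computing the numbers you would for any other classifier. A sketch, assuming binary pass/fail verdicts from both the judge and human reviewers on the same held-out items: raw agreement, Cohen's kappa (which discounts the agreement expected by chance, so it is not flattered when most outputs pass), and the judge's recall on the items humans marked as failures.

```python
def judge_calibration(human: list[bool], judge: list[bool]) -> dict[str, float]:
    """Compare judge verdicts (True = pass) to human labels on the same items."""
    if len(human) != len(judge) or not human:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(human)
    agreement = sum(h == j for h, j in zip(human, judge)) / n
    # Chance agreement for Cohen's kappa, from each rater's pass rate.
    p_h = sum(human) / n
    p_j = sum(judge) / n
    expected = p_h * p_j + (1 - p_h) * (1 - p_j)
    # Kappa is undefined when both raters used a single class for every item.
    kappa = float("nan") if expected == 1.0 else (agreement - expected) / (1 - expected)
    # Of the items humans failed, what fraction did the judge also fail?
    judge_on_human_fails = [j for h, j in zip(human, judge) if not h]
    if judge_on_human_fails:
        failure_recall = sum(not j for j in judge_on_human_fails) / len(judge_on_human_fails)
    else:
        failure_recall = float("nan")
    return {"agreement": agreement, "kappa": kappa, "failure_recall": failure_recall}
```

With human labels `[T, T, T, T, F, F, T, T, F, T]` and judge verdicts `[T, T, T, T, T, F, T, T, T, T]`, agreement is 0.8, kappa is roughly 0.41, and the judge catches only one of the three human-flagged failures. That last number is the blind spot raw agreement hides.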

Reliability Notes

Log for replay, not just for debugging. PromptLayer's production failure analysis recommends logging correlation IDs, prompt template references, and retrieval document IDs rather than raw prompt text — this lets you reconstruct the reasoning path without storing PII. The goal is being able to replay a failure case exactly, not just knowing it happened.
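A sketch of what such a record might look like. The field names are hypothetical, not PromptLayer's schema, and the model and params fields are additions of this sketch. The record holds only references: correlation ID, template ID and version, retrieved document IDs, and sampling settings. How you store or re-fetch the user's own input is deliberately left out, since that is where the PII question lives.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class ReplayRecord:
    """References needed to replay a request; deliberately no raw prompt text."""
    correlation_id: str
    prompt_template_id: str
    prompt_template_version: str
    retrieval_doc_ids: tuple[str, ...]
    model: str  # assumption: a pinned model version, not a floating alias
    params: dict = field(default_factory=dict)  # sampling settings, e.g. temperature

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, text: str) -> "ReplayRecord":
        data = json.loads(text)
        data["retrieval_doc_ids"] = tuple(data["retrieval_doc_ids"])  # JSON lists back to tuple
        return cls(**data)


def new_record(template_id: str, template_version: str, doc_ids: list[str], model: str, **params) -> ReplayRecord:
    return ReplayRecord(
        correlation_id=str(uuid.uuid4()),
        prompt_template_id=template_id,
        prompt_template_version=template_version,
        retrieval_doc_ids=tuple(doc_ids),
        model=model,
        params=dict(params),
    )
```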

Temperature=0 is not determinism. One production team learned this with a financial client: identical inputs with temperature=0 still produced different outputs across API calls due to floating-point variance and model version updates. If your system requires reproducible outputs, you need explicit version pinning and output caching — not just a temperature setting.
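A minimal sketch of pairing the two, assuming a hypothetical `call_model(model, prompt, **params)` client function. The cache key includes the pinned model version, so a model change produces a cache miss rather than a silent behavior change, and a repeated request returns the stored output instead of a fresh sample. The cache here is in memory for illustration; a production version would need persistent storage.

```python
import hashlib
import json
from typing import Callable


class PinnedCache:
    """Output cache keyed on pinned model version, prompt, and sampling params."""

    def __init__(self, model: str, call_model: Callable[..., str]):
        # `model` should be a versioned identifier, not an alias that can move.
        self.model = model
        self.call_model = call_model  # hypothetical client function
        self._store: dict[str, str] = {}

    def _key(self, prompt: str, params: dict) -> str:
        blob = json.dumps({"model": self.model, "prompt": prompt, "params": params}, sort_keys=True)
        return hashlib.sha256(blob.encode("utf-8")).hexdigest()

    def generate(self, prompt: str, **params) -> str:
        key = self._key(prompt, params)
        if key not in self._store:
            self._store[key] = self.call_model(self.model, prompt, **params)
        return self._store[key]
```

This makes repeats reproducible; it does not make the model itself deterministic, and every cache miss still draws a fresh output.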

Cost Watch

Eval infrastructure has a compute bill. Running LLM-as-judge at scale means you're paying for evaluation calls on top of production calls. I'd argue the right framing is: what's the cost of a false negative? If a missed failure costs you an escalation, a refund, or a compliance incident, the eval compute is cheap. If you're running judges on low-stakes outputs, sample rather than judging everything: 10–20% coverage on routine requests, 100% on anything touching sensitive workflows.
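One way to implement that tiered sampling, assuming each request carries a stable ID and a flag for sensitive workflows (both hypothetical here): hash the ID into a bucket so the decision is deterministic, then judge all sensitive traffic and a fixed fraction of the rest. The 15% default sits inside the 10–20% range above.

```python
import hashlib


def should_judge(request_id: str, sensitive: bool, routine_rate: float = 0.15) -> bool:
    """Deterministically decide whether to run the LLM judge on this request."""
    if sensitive:
        return True  # full coverage on sensitive workflows
    if not 0.0 <= routine_rate <= 1.0:
        raise ValueError("routine_rate must be in [0, 1]")
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    # Map the first 4 bytes of the hash to a bucket in [0, 1).
    bucket = int.from_bytes(digest[:4], "big") / 2**32
    return bucket < routine_rate
```

Hashing instead of calling `random.random()` means a given request is always either in or out of the sample, so re-running an eval over the same logs judges the same requests.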

Prompt bloat compounds. The instinct to fix inconsistent outputs by adding more examples to the prompt is expensive and often counterproductive. Per that same engineer's retrospective, 80% of consistency failures come from the model not understanding task boundaries — when to say "I don't know" versus when to infer. More examples add tokens and noise. Cleaner task framing is cheaper and more effective.